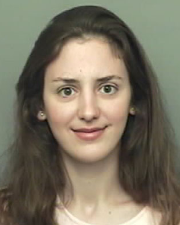

Daisy Stanton

Authored Publications

Generative semi-supervised learning with a neural seq2seq noisy channel

Siyuan Ma

ICML Workshop on Structured Probabilistic Inference (2023)

Abstract

We present a noisy channel generative model of two sequences, for example text and speech, which enables uncovering the associations between the two modalities when limited paired data is available. To address the intractability of the exact model under a realistic data setup, we propose a variational inference approximation. To train this variational model with categorical data, we propose a KL encoder loss approach that has connections to the wake-sleep algorithm. Identifying the joint or conditional distributions by observing only unpaired samples from the marginals is possible only when the data distribution has certain structure; we discuss which conditional independence assumptions might achieve this, and these assumptions guide the architecture design. Experimental results show that even a tiny amount of paired data is sufficient to learn to relate the two modalities (here, graphemes and phonemes) when large amounts of unpaired data are available, paving the way for adopting this principled approach in ASR and TTS models in low-resource data regimes.
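The core difficulty named above is that the exact marginal over the hidden sequence is intractable, so training goes through a variational lower bound. The sketch below is not the paper's KL encoder loss; it is a toy illustration of the standard evidence lower bound for a noisy channel p(y) p(x | y), with made-up probabilities in the hypothetical `PRIOR` and `CHANNEL` tables. The latent space is small enough to enumerate, so the bound can be checked against the exact log-likelihood.

```python
import math

# Hypothetical toy numbers, not from the paper: y ranges over three candidate
# hidden sequences and x is one fixed observed sequence.
PRIOR = {"y1": 0.2, "y2": 0.5, "y3": 0.3}      # p(y), sums to 1
CHANNEL = {"y1": 0.6, "y2": 0.3, "y3": 0.05}   # p(x | y) for the observed x


def log_marginal() -> float:
    """Exact log p(x) = log sum_y p(y) p(x | y); tractable only because y is tiny."""
    return math.log(sum(PRIOR[y] * CHANNEL[y] for y in PRIOR))


def exact_posterior() -> dict[str, float]:
    """p(y | x) by Bayes' rule."""
    joint = {y: PRIOR[y] * CHANNEL[y] for y in PRIOR}
    total = sum(joint.values())
    return {y: p / total for y, p in joint.items()}


def elbo(q: dict[str, float]) -> float:
    """E_q[log p(x | y) + log p(y) - log q(y | x)], a lower bound on log p(x)."""
    return sum(
        qy * (math.log(CHANNEL[y]) + math.log(PRIOR[y]) - math.log(qy))
        for y, qy in q.items()
        if qy > 0.0
    )


uniform_q = {y: 1.0 / len(PRIOR) for y in PRIOR}
print(f"log p(x)          = {log_marginal():.4f}")
print(f"ELBO, uniform q   = {elbo(uniform_q):.4f}")          # strictly below log p(x)
print(f"ELBO, posterior q = {elbo(exact_posterior()):.4f}")  # matches log p(x)
```

With a uniform q the bound is loose; setting q to the exact posterior closes the gap, which is what a learned encoder approximates when enumerating the hidden sequence is impossible.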

Abstract

This work explores the task of synthesizing speech in human-sounding voices unseen in any training set. We call this task "speaker generation", and present TacoSpawn, a system that performs competitively at this task. TacoSpawn is a deep generative text-to-speech model that learns a distribution over a speaker embedding space, which enables sampling of novel and diverse speakers. Our method is easy to implement, and does not require transfer learning from speaker ID systems. We present objective and subjective metrics for evaluating performance on this task, and demonstrate that our proposed objective metrics correlate with human perception of speaker similarity.
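Speaker generation, as described above, comes down to fitting a distribution over speaker embeddings and sampling new points from it. A minimal sketch, assuming a single diagonal Gaussian fit to hypothetical embeddings (TacoSpawn's actual prior, and how its embeddings are learned, may differ; the `TRAIN_SPEAKERS` values are invented):

```python
import math
import random
import statistics

# Hypothetical learned speaker embeddings (4 training speakers, 3 dims).
TRAIN_SPEAKERS = [
    [0.1, -0.2, 0.5],
    [0.3, 0.0, 0.4],
    [-0.1, -0.1, 0.6],
    [0.2, 0.1, 0.3],
]


def fit_diag_gaussian(embeddings: list[list[float]]) -> tuple[list[float], list[float]]:
    """Per-dimension mean and sample standard deviation (a diagonal Gaussian prior, assumed)."""
    columns = list(zip(*embeddings))
    means = [statistics.fmean(col) for col in columns]
    stdevs = [statistics.stdev(col) for col in columns]
    return means, stdevs


def sample_speakers(means, stdevs, n: int, seed: int = 0) -> list[list[float]]:
    """Draw n novel speaker embeddings from the fitted prior."""
    rng = random.Random(seed)
    return [[rng.gauss(m, s) for m, s in zip(means, stdevs)] for _ in range(n)]


def nearest_train_distance(embedding: list[float]) -> float:
    """Euclidean distance to the closest training speaker (a rough novelty check)."""
    return min(math.dist(embedding, other) for other in TRAIN_SPEAKERS)


means, stdevs = fit_diag_gaussian(TRAIN_SPEAKERS)
for emb in sample_speakers(means, stdevs, n=3):
    print([round(v, 3) for v in emb], round(nearest_train_distance(emb), 3))
```

The distance to the nearest training speaker is only a crude proxy for novelty; the objective and subjective metrics proposed in the paper are not reproduced here.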

Abstract

Despite the ability to produce human-level speech for in-domain text, attention-based end-to-end text-to-speech (TTS) systems suffer from text alignment failures that increase in frequency for out-of-domain text. We show that these failures can be addressed using simple location-relative attention mechanisms that do away with content-based query/key comparisons. We compare two families of attention mechanisms: location-relative GMM-based mechanisms and additive energy-based mechanisms. We suggest simple modifications to GMM-based attention that allow it to align quickly and consistently during training, and introduce a new location-relative attention mechanism to the additive energy-based family, called Dynamic Convolution Attention (DCA). We compare the various mechanisms in terms of alignment speed and consistency during training, naturalness, and ability to generalize to long utterances, and conclude that GMM attention and DCA can generalize to very long utterances, while preserving naturalness for shorter, in-domain utterances.
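To make "location-relative" concrete: in GMM-based attention the alignment at each decoder step is a mixture of Gaussians over input positions whose means are shifted forward from the previous step, so no query/key content comparison is involved. The sketch below assumes softplus-constrained mean offsets and widths and takes the per-step parameters as fixed hypothetical constants instead of predicting them from a decoder state; it does not reproduce the paper's specific GMM variants or DCA.

```python
import math


def softplus(v: float) -> float:
    """Numerically stable log(1 + exp(v)); always positive."""
    return max(v, 0.0) + math.log1p(math.exp(-abs(v)))


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def gmm_attention_step(prev_means, raw_weights, raw_deltas, raw_scales, num_inputs):
    """One decoder step of a location-relative GMM attention sketch.

    In a real model the raw_* parameters would be predicted from the decoder
    state; here they are passed in directly. The alignment depends only on
    input positions, never on encoder content.
    """
    weights = softmax(raw_weights)
    # softplus > 0, so every component mean can only move forward.
    means = [m + softplus(d) for m, d in zip(prev_means, raw_deltas)]
    sigmas = [softplus(s) for s in raw_scales]
    alignment = [
        sum(
            w * math.exp(-((j - mu) ** 2) / (2.0 * sig**2)) / math.sqrt(2.0 * math.pi * sig**2)
            for w, mu, sig in zip(weights, means, sigmas)
        )
        for j in range(num_inputs)
    ]
    return means, alignment


# Hypothetical constant parameters for two mixture components over 10 inputs.
means = [0.0, 0.0]
peaks = []
for _ in range(5):
    means, alignment = gmm_attention_step(
        means, raw_weights=[0.0, 0.0], raw_deltas=[0.5, 0.0], raw_scales=[0.5, 0.5], num_inputs=10
    )
    peaks.append(max(range(10), key=alignment.__getitem__))
print("argmax input position per decoder step:", peaks)
```

Because every mean offset is non-negative, the attention peak can only advance along the input, which is one intuition for why such mechanisms can stay aligned on long utterances.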

Abstract

Recent work has explored sequence-to-sequence latent variable models for expressive speech synthesis (supporting control and transfer of prosody and style), but has not presented a coherent framework for understanding the trade-offs between the competing methods. In this paper, we propose embedding capacity (the amount of information the embedding contains about the data) as a unified method of analyzing the behavior of latent variable models of speech, comparing existing heuristic (non-variational) methods to variational methods that are able to explicitly constrain capacity using an upper bound on representational mutual information. In our proposed model (Capacitron), we show that by adding conditional dependencies to the variational posterior such that it matches the form of the true posterior, the same model can be used for high-precision prosody transfer, text-agnostic style transfer, and generation of natural-sounding prior samples. For multi-speaker models, Capacitron is able to preserve target speaker identity during inter-speaker prosody transfer and when drawing samples from the latent prior. Lastly, we introduce a method for decomposing embedding capacity hierarchically across two sets of latents, allowing a portion of the latent variability to be specified and the remaining variability sampled from a learned prior. Audio examples are available on the web.
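The capacity constraint can be sketched as a Lagrangian. The average KL divergence between the variational posterior and the prior upper-bounds the mutual information between data and embedding, and a multiplier beta pushes that KL toward a target capacity C. The Python below is a minimal sketch under those assumptions, with a diagonal Gaussian posterior and a standard normal prior; the function names, the projected update for beta, and the numbers are illustrative, not Capacitron's exact training procedure.

```python
import math


def kl_to_standard_normal(mu: list[float], log_var: list[float]) -> float:
    """KL(N(mu, diag(exp(log_var))) || N(0, I)), summed over latent dimensions."""
    return 0.5 * sum(m * m + math.exp(lv) - lv - 1.0 for m, lv in zip(mu, log_var))


def capacity_lagrangian(recon_nll: float, kl: float, beta: float, capacity: float) -> float:
    """Reconstruction loss plus beta * (KL - C); the model minimizes this over its weights."""
    return recon_nll + beta * (kl - capacity)


def update_beta(beta: float, kl: float, capacity: float, lr: float) -> float:
    """Projected gradient ascent on beta (an assumed update rule): raise it while KL exceeds C, never below 0."""
    return max(0.0, beta + lr * (kl - capacity))


# Illustrative numbers only.
kl = kl_to_standard_normal(mu=[0.5, -0.5], log_var=[0.0, 0.0])
beta = update_beta(beta=1.0, kl=kl, capacity=0.1, lr=1.0)
print(f"KL = {kl:.3f} nats, updated beta = {beta:.3f}")
```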

Semi-Supervised Generative Modeling for Controllable Speech Synthesis

Raza Habib

ICLR (2019)

Abstract

We present a novel generative model that combines state-of-the-art neural text-to-speech (TTS) with semi-supervised probabilistic latent variable models. By providing partial supervision to some of the latent variables, we are able to force them to take on consistent and interpretable purposes, which has not previously been possible with purely unsupervised methods. We demonstrate that our model is able to reliably discover and control important but rarely labelled attributes of speech, such as affect and speaking rate, with as little as 0.5% (15 minutes) supervision. Even at such low supervision levels, we do not observe a degradation of synthesis quality compared to a state-of-the-art baseline.

Towards End-to-End Prosody Transfer for Expressive Speech Synthesis with Tacotron

Ying Xiao

Yuxuan Wang

Joel Shor

Rob Clark

International Conference on Machine Learning (2018)

Abstract

We present an extension to the Tacotron speech synthesis architecture that learns a latent embedding space of prosody, derived from a reference acoustic representation containing the desired prosody. We show that conditioning Tacotron on this learned embedding space results in synthesized audio that matches the reference signal’s prosody with fine time detail. We define several quantitative and subjective metrics for evaluating prosody transfer, and report results and audio samples from a single-speaker and 44-speaker Tacotron model on a prosody transfer task.
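The conditioning step can be illustrated in a few lines: a reference encoder compresses a variable-length reference into one fixed-length prosody embedding, which is then broadcast across the text encoder's timesteps. In this sketch the learned reference encoder is replaced by plain mean pooling (a hypothetical stand-in, not the paper's network), and broadcast-concatenation is assumed as the conditioning mechanism.

```python
def toy_reference_encoder(reference_frames: list[list[float]]) -> list[float]:
    """Hypothetical stand-in for a learned reference encoder: average the
    reference frames into one fixed-length prosody embedding."""
    n = len(reference_frames)
    return [sum(column) / n for column in zip(*reference_frames)]


def condition_on_prosody(encoder_outputs, prosody_embedding):
    """Broadcast the prosody embedding to every text timestep and concatenate."""
    return [list(step) + list(prosody_embedding) for step in encoder_outputs]


text_encoder_outputs = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]  # 3 text timesteps (toy values)
reference = [[1.0, 2.0], [3.0, 4.0], [5.0, 6.0], [7.0, 8.0]]  # 4 reference frames (toy values)
conditioned = condition_on_prosody(text_encoder_outputs, toy_reference_encoder(reference))
for row in conditioned:
    print(row)
```

The reference has four frames and the text three timesteps; the fixed-length embedding is what decouples the two lengths.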

Style Tokens: Unsupervised Style Modeling, Control and Transfer in End-to-End Speech Synthesis

Yuxuan Wang

Yu Zhang

Joel Shor

Ying Xiao

Fei Ren

Ye Jia

ICML (2018)

Abstract

In this work, we propose “global style tokens” (GSTs), a bank of embeddings that are jointly trained within Tacotron, a state-of-the-art end-to-end speech synthesis system. The embeddings are trained in a completely unsupervised manner, yet learn to model a large range of acoustic expressiveness. GSTs lead to a rich set of surprising results. The soft, interpretable “labels” they generate can be used to control synthesis in novel ways, such as varying speed and modifying speaking style, independently of the text content. The labels can also be used for style transfer, replicating the speaking style of one “seed” phrase across an entire long-form text corpus. Perhaps most surprisingly, when trained on noisy, unlabelled found data, GSTs learn to factorize noise and speaker identity, providing a path towards highly scalable but robust speech synthesis.
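The token bank supports both uses described above: for transfer, a query derived from reference audio attends over the tokens; for control, the token weights are chosen by hand. A minimal sketch, assuming single-head dot-product attention and a hypothetical three-token bank of 2-D embeddings (the paper's attention module and token dimensions may differ):

```python
import math


def softmax(xs: list[float]) -> list[float]:
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def combine_tokens(weights: list[float], tokens: list[list[float]]) -> list[float]:
    """Weighted sum of style-token embeddings."""
    return [sum(w * tok[d] for w, tok in zip(weights, tokens)) for d in range(len(tokens[0]))]


def style_from_reference(query: list[float], tokens: list[list[float]]):
    """Transfer: score each token against a reference-audio query, then mix."""
    scores = [sum(q * k for q, k in zip(query, tok)) for tok in tokens]
    weights = softmax(scores)
    return weights, combine_tokens(weights, tokens)


# Hypothetical bank of three 2-D tokens (learned jointly with the TTS model in practice).
TOKENS = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0]]

weights, transferred = style_from_reference(query=[2.0, 0.0], tokens=TOKENS)
controlled = combine_tokens([0.0, 1.0, 0.0], TOKENS)  # control: select the second token by hand
print([round(w, 3) for w in weights], transferred, controlled)
```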

Uncovering Latent Style Factors for Expressive Speech Synthesis

Yuxuan Wang

Ying Xiao

Joel Shor

Rob Clark

NIPS Workshop on Machine Learning for Audio Signal Processing (ML4Audio) (2017) (to appear)

Abstract

Prosodic modeling is a core problem in speech synthesis. The key challenge is producing desirable prosody from textual input containing only phonetic information. In this preliminary study, we introduce the concept of "style tokens" in Tacotron, a recently proposed end-to-end neural speech synthesis model. Using style tokens, we aim to extract independent prosodic styles from training data. We show that without annotation data or an explicit supervision signal, our approach can automatically learn a variety of prosodic variations in a purely data-driven way. Importantly, each style token corresponds to a fixed style factor regardless of the given text sequence. As a result, we can control the prosodic style of synthetic speech in a somewhat predictable and globally consistent way.

Tacotron: Towards End-to-End Speech Synthesis

Yuxuan Wang

Yonghui Wu

Navdeep Jaitly

Zongheng Yang

Ying Xiao

Zhifeng Chen

Samy Bengio

Yannis Agiomyrgiannakis

Rob Clark

Interspeech (2017)

Abstract

A text-to-speech synthesis system typically consists of multiple stages, such as a text analysis frontend, an acoustic model and an audio synthesis module. Building these components often requires extensive domain expertise and may involve brittle design choices. In this paper, we present Tacotron, an end-to-end generative text-to-speech model that synthesizes speech directly from characters. Given (text, audio) pairs, the model can be trained completely from scratch with random initialization. We present several key techniques to make the sequence-to-sequence framework perform well for this challenging task. Tacotron achieves a subjective mean opinion score of 3.82 on a 5-point scale for US English, outperforming a production parametric system in terms of naturalness. In addition, since Tacotron generates speech at the frame level, it is substantially faster than sample-level autoregressive methods.
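The speed claim concerns the number of sequential autoregressive steps. A back-of-the-envelope sketch, using illustrative values that are assumptions here (24 kHz audio, a 12.5 ms frame shift, and a decoder that emits 2 frames per step):

```python
def sequential_steps_per_second(sample_rate_hz: int, frame_shift_ms: float, reduction_factor: int):
    """Autoregressive steps needed per second of audio at sample level vs frame level."""
    sample_level = sample_rate_hz                        # one step per waveform sample
    frames_per_second = 1000.0 / frame_shift_ms
    frame_level = frames_per_second / reduction_factor   # assumed: r frames emitted per decoder step
    return sample_level, frame_level


# Illustrative settings (assumptions): 24 kHz audio, 12.5 ms hop, 2 frames per step.
samples, decoder_steps = sequential_steps_per_second(24_000, 12.5, 2)
print(f"{samples} sample-level steps vs {decoder_steps:.0f} decoder steps per second "
      f"({samples / decoder_steps:.0f}x fewer sequential steps)")
```

This counts decoder steps only; it ignores per-step cost and the time spent turning frames into a waveform, so it is not a wall-clock measurement.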
