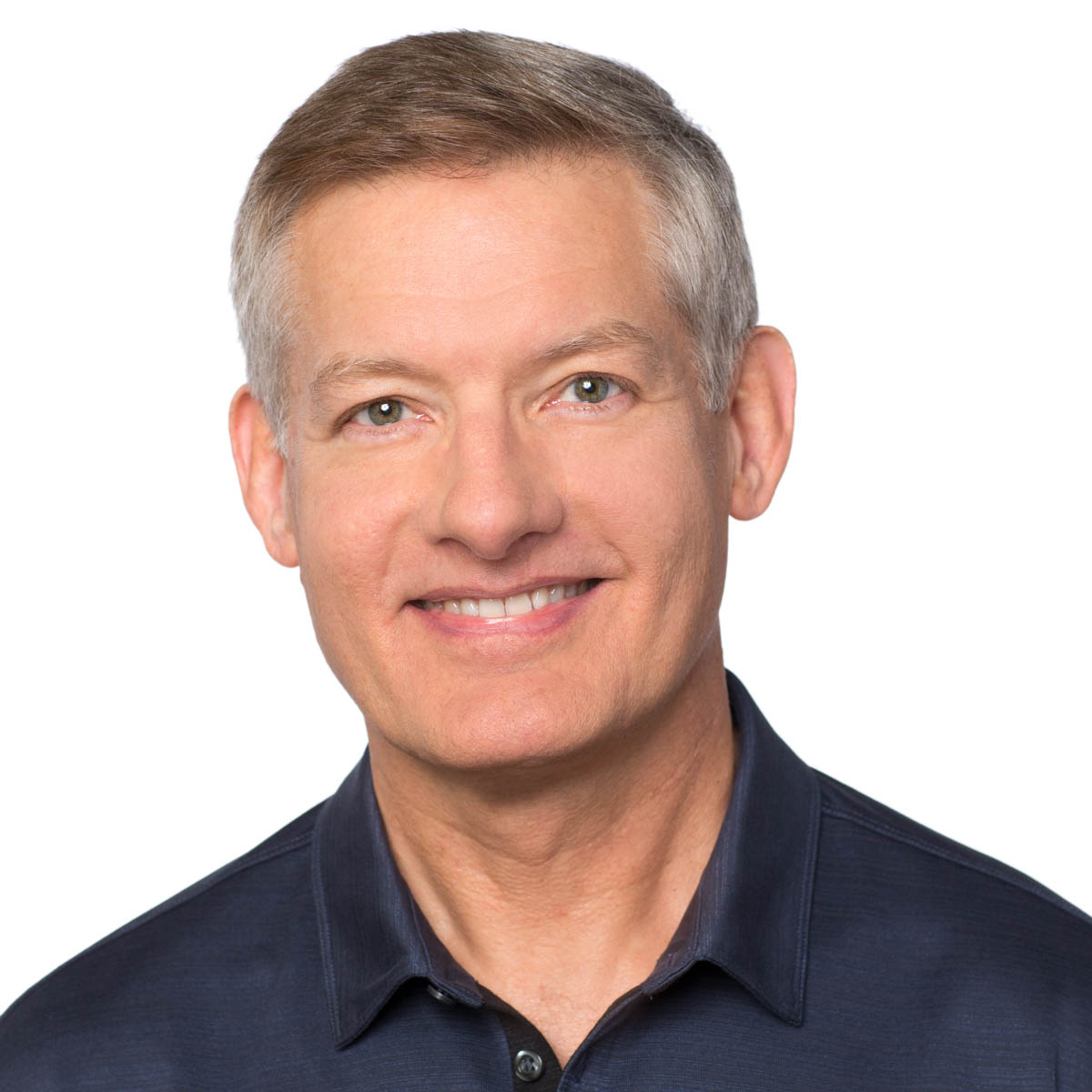

Art Pope

Art Pope received BS and PhD degrees from the University of British Columbia and an SM from Harvard, all in computer science. He is a software engineer at Google in Mountain View, CA. Prior to joining Google in 2011, he worked at BBN, GE, Sarnoff and SAIC. His research interests include computer vision, machine learning and artificial intelligence.

Authored Publications

A petavoxel fragment of human cerebral cortex reconstructed at nanoscale resolution

Alex Shapson-Coe, Daniel R. Berger, Yuelong Wu, Richard L. Schalek, Shuohong Wang, Neha Karlupia, Sven Dorkenwald, Evelina Sjostedt, Dongil Lee, Luke Bailey, Angerica Fitzmaurice, Rohin Kar, Benjamin Field, Hank Wu, Julian Wagner-Carena, David Aley, Joanna Lau, Zudi Lin, Donglai Wei, Hanspeter Pfister, Adi Peleg, Jeff W. Lichtman

Science (2024)

To fully understand how the human brain works, knowledge of its structure at high resolution is needed. Presented here is a computationally intensive reconstruction of the ultrastructure of a cubic millimeter of human temporal cortex that was surgically removed to gain access to an underlying epileptic focus. It contains about 57,000 cells, about 230 millimeters of blood vessels, and about 150 million synapses and comprises 1.4 petabytes. Our analysis showed that glia outnumber neurons 2:1, oligodendrocytes were the most common cell, deep layer excitatory neurons could be classified on the basis of dendritic orientation, and among thousands of weak connections to each neuron, there exist rare powerful axonal inputs of up to 50 synapses. Further studies using this resource may bring valuable insights into the mysteries of the human brain.

High-Precision Automated Reconstruction of Neurons with Flood-Filling Networks

Jörgen Kornfeld, Larry Lindsey, Winfried Denk

Nature Methods (2018)

Reconstruction of neural circuits from volume electron microscopy data requires the tracing of cells in their entirety, including all their neurites. Automated approaches have been developed for tracing, but their error rates are too high to generate reliable circuit diagrams without extensive human proofreading. We present flood-filling networks, a method for automated segmentation that, similar to most previous efforts, uses convolutional neural networks, but contains in addition a recurrent pathway that allows the iterative optimization and extension of individual neuronal processes. We used flood-filling networks to trace neurons in a dataset obtained by serial block-face electron microscopy of a zebra finch brain. Using our method, we achieved a mean error-free neurite path length of 1.1 mm, and we observed only four mergers in a test set with a path length of 97 mm. The performance of flood-filling networks was an order of magnitude better than that of previous approaches applied to this dataset, although with substantially increased computational costs.
