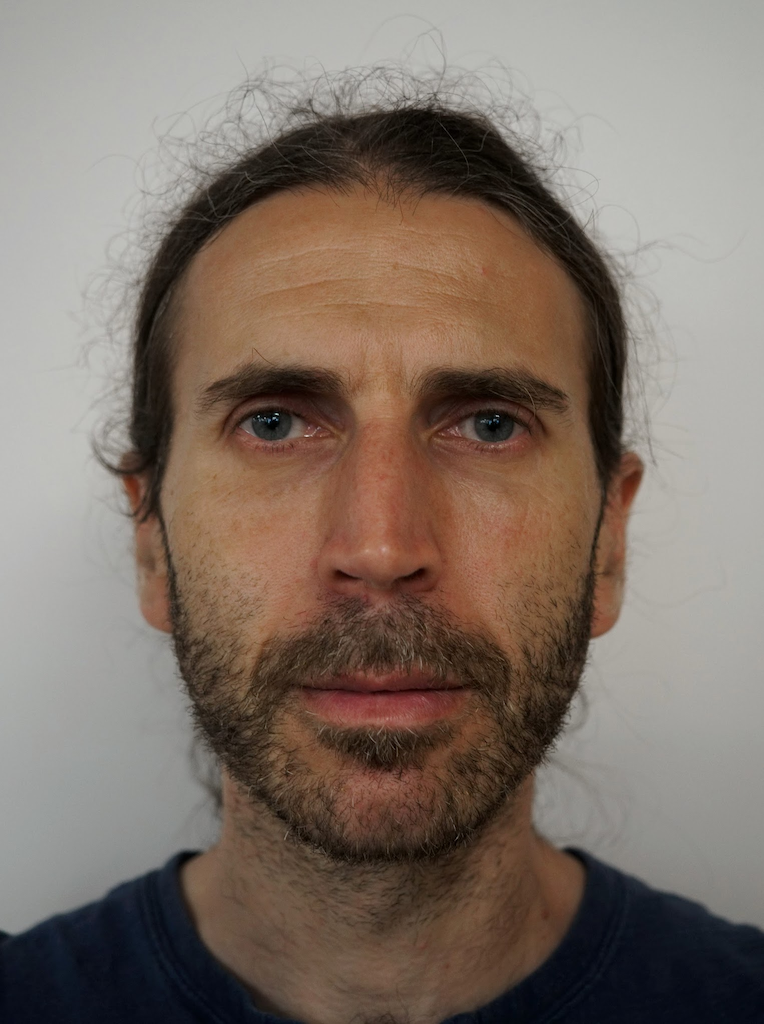

Alexander Gutkin

Authored Publications

Context-aware Transliteration of Romanized South Asian Languages

Christo Kirov

Computational Linguistics, 50 (2) (2024), 475–534

Helpful Neighbors: Leveraging Neighbors in Geographic Feature Pronunciation

Llion Jones

Richard William Sproat

Haruko Ishikawa

Transactions of the Association for Computational Linguistics, 11 (2023), 85–101

XTREME-UP: A User-Centric Scarce-Data Benchmark for Under-Represented Languages

Sebastian Ruder

Mihir Sanjay Kale

Shruti Rijhwani

Jean-Michel Sarr

Cindy Wang

John Wieting

Christo Kirov

Dana L. Dickinson

Bidisha Samanta

Connie Tao

David Adelani

Vera Axelrod

Reeve Ingle

Dmitry Panteleev

Findings of the Association for Computational Linguistics: EMNLP 2023, Association for Computational Linguistics, Singapore, pp. 1856-1884

Building Machine Translation Systems for the Next Thousand Languages

Julia Kreutzer

Aditya Siddhant

Mengmeng Niu

Pallavi Nikhil Baljekar

Xavier Garcia

Vera Saldinger Axelrod

Yuan Cao

Maxim Krikun

Pidong Wang

Apu Shah

Zhifeng Chen

Yonghui Wu

Macduff Richard Hughes

Google Research (2022)

Graphemic Normalization of the Perso-Arabic Script

Raiomond Doctor

Richard Sproat

Proceedings of Grapholinguistics in the 21st Century, 2022 (G21C, Grafematik), Fluxus Editions, Brest, France, pp. 315-376

Extensions to Brahmic script processing within the Nisaba library: new scripts, languages and utilities

Raiomond Doctor

Proceedings of the 13th Language Resources and Evaluation Conference (LREC), European Language Resources Association (ELRA), 20-25 June, Marseille, France (2022), pp. 6450-6460

Mockingbird at the SIGTYP 2022 Shared Task: Two Types of Models for Prediction of Cognate Reflexes

Christo Kirov

Richard Sproat

Proceedings of the 4th Workshop on Research in Computational Typology and Multilingual NLP (SIGTYP 2022) at NAACL, Association for Computational Linguistics (ACL), Seattle, WA, pp. 70-79

Beyond Arabic: Software for Perso-Arabic Script Manipulation

Raiomond Doctor

Richard Sproat

Proceedings of the 7th Arabic Natural Language Processing Workshop (WANLP2022) at EMNLP, Association for Computational Linguistics (ACL), Abu Dhabi, United Arab Emirates (Hybrid), pp. 381-387

Criteria for Useful Automatic Romanization in South Asian Languages

Proceedings of the 13th Language Resources and Evaluation Conference (LREC), European Language Resources Association (ELRA), 20-25 June, Marseille, France (2022), pp. 6662-6673

Design principles of an open-source language modeling microservice package for AAC text-entry applications

9th Workshop on Speech and Language Processing for Assistive Technologies (SLPAT-2022), Association for Computational Linguistics (ACL), Dublin, Ireland, pp. 1-16