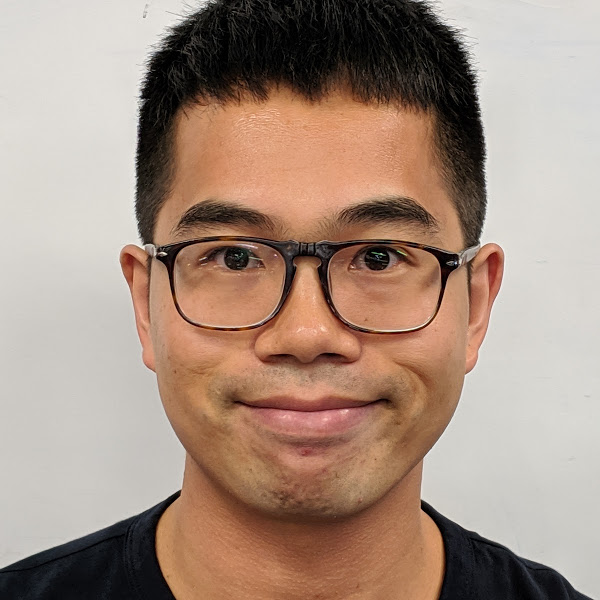

Lechao Xiao

Lechao is a research scientist in the Brain team at Google, where he is working on machine learning and deep learning. Prior to Google Brain, he was a Hans Rademacher Instructor of Mathematics at the University of Pennsylvania, where he was working on harmonic analysis. He earned his PhD in mathematics from the University of Illinois at Urbana-Champaign and his BA in pure and applied math from Zhejiang University, Hangzhou, China.

Lechao's research interests include the theory of machine learning and deep learning, optimization, Gaussian processes, and generalization. He is particularly interested in research problems that combine theory and practice well. With his collaborators, he developed a mean field theory for convolutional neural networks. He also developed several novel initialization methods (orthogonal convolutional kernels and delta-orthogonal kernels) that allow practitioners to train neural networks with more than 10,000 layers without common techniques such as residual connections or batch normalization.

Authored Publications

Fast Neural Kernel Embeddings for General Activations

Insu Han

Amir Zandieh

Jaehoon Lee

Roman Novak

Amin Karbasi

NeurIPS 2022 (to appear)

Preview abstract

The infinite-width limit has shed light on generalization and optimization aspects of deep learning by establishing connections between neural networks and kernel methods. Despite their importance, the utility of these kernel methods was limited in large-scale learning settings due to their (super-)quadratic runtime and memory complexities. Moreover, most prior works on neural kernels have focused on the ReLU activation, mainly due to its popularity but also due to the difficulty of computing such kernels for general activations. In this work, we overcome such difficulties by providing methods to work with general activations. First, we compile and expand the list of activation functions admitting exact dual activation expressions to compute neural kernels. When the exact computation is unknown, we present methods to effectively approximate them. We propose a fast sketching method that approximates any multi-layered Neural Network Gaussian Process (NNGP) kernel and Neural Tangent Kernel (NTK) matrices for a wide range of activation functions, going beyond the commonly analyzed ReLU activation. This is done by showing how to approximate the neural kernels using the truncated Hermite expansion of any desired activation function. While most prior works require data points on the unit sphere, our methods do not suffer from such limitations and are applicable to any dataset of points in ℝ^d. Furthermore, we provide a subspace embedding for NNGP and NTK matrices with near input-sparsity runtime and near-optimal target dimension, which applies to any homogeneous dual activation function with a rapidly convergent Taylor expansion. Empirically, with respect to exact convolutional NTK (CNTK) computation, our method achieves a 106× speedup for approximate CNTK of a 5-layer Myrtle network on the CIFAR-10 dataset.
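To make the truncated Hermite expansion idea concrete, here is a minimal sketch, not the paper's sketching algorithm. For two unit-variance Gaussian pre-activations with correlation ρ, the dual activation can be written as κ(ρ) = Σ_k a_k² ρ^k, where a_k are the coefficients of the activation in the normalized probabilists' Hermite basis. The function names, the grid-based integration, and the truncation degree are all assumptions chosen for illustration; ReLU is used only because its dual activation has a known closed form to compare against.

```python
import numpy as np


def relu(z):
    return np.maximum(z, 0.0)


def hermite_coefficients(activation, degree, grid_size=200001, z_max=10.0):
    """Coefficients a_k = E[activation(z) * h_k(z)] for z ~ N(0, 1), k = 0..degree.

    h_k is the normalized probabilists' Hermite polynomial He_k / sqrt(k!).
    The expectation is approximated by a Riemann sum on a fine uniform grid.
    """
    z = np.linspace(-z_max, z_max, grid_size)
    dz = z[1] - z[0]
    weight = np.exp(-0.5 * z**2) / np.sqrt(2.0 * np.pi) * dz
    values = activation(z)
    h_prev = np.zeros_like(z)  # placeholder for h_{-1}
    h_curr = np.ones_like(z)   # h_0
    coeffs = []
    for k in range(degree + 1):
        coeffs.append(np.sum(values * h_curr * weight))
        # Three-term recurrence for the normalized polynomials.
        h_next = (z * h_curr - np.sqrt(k) * h_prev) / np.sqrt(k + 1.0)
        h_prev, h_curr = h_curr, h_next
    return np.array(coeffs)


def truncated_dual_activation(coeffs, rho):
    """Truncated series sum_k a_k^2 * rho^k."""
    powers = rho ** np.arange(len(coeffs))
    return float(np.sum(coeffs**2 * powers))


def relu_dual_exact(rho):
    """Closed-form E[relu(u) relu(v)] for unit-variance u, v with correlation rho."""
    return (np.sqrt(1.0 - rho**2) + (np.pi - np.arccos(rho)) * rho) / (2.0 * np.pi)


coeffs = hermite_coefficients(relu, degree=30)
approx = truncated_dual_activation(coeffs, 0.5)
exact = relu_dual_exact(0.5)
```

Swapping `relu` for another activation gives an approximate dual activation even when no closed form is available, which is the situation the abstract describes; the truncation error grows as |ρ| approaches 1.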

Preview abstract

The effectiveness of machine learning algorithms arises from being able to extract useful features from large amounts of data. As model and dataset sizes increase, dataset distillation methods that compress large datasets into significantly smaller yet highly performant ones will become valuable in terms of training efficiency and useful feature extraction. To that end, we apply a novel distributed kernel-based meta-learning framework to achieve state-of-the-art results for dataset distillation using infinitely wide convolutional neural networks. For instance, using only 10 datapoints (0.02% of original dataset), we obtain over 64% test accuracy on the CIFAR-10 image classification task, a dramatic improvement over the previous best test accuracy of 40%. Our state-of-the-art results extend across many other settings for MNIST, Fashion-MNIST, CIFAR-10, CIFAR-100, and SVHN. Furthermore, we perform some preliminary analyses of our distilled datasets to shed light on how they differ from naturally occurring data.

Exploring the Uncertainty Properties of Neural Networks’ Implicit Priors in the Infinite-Width Limit

Ben Adlam

Jaehoon Lee

Jeffrey Pennington

International Conference on Learning Representations, 2021, 27 pages

Preview abstract

Modern deep learning models have achieved great success in predictive accuracy for many data modalities. However, their application to many real-world tasks is restricted by poor uncertainty estimates, such as overconfidence on out-of-distribution (OOD) data and ungraceful failing under distributional shift. Previous benchmarks have found that ensembles of neural networks (NNs) are typically the best calibrated models on OOD data. Inspired by this, we leverage recent theoretical advances that characterize the function-space prior of an infinitely-wide NN as a Gaussian process, termed the neural network Gaussian process (NNGP). We use the NNGP with a softmax link function to build a probabilistic model for multi-class classification and marginalize over the latent Gaussian outputs to sample from the posterior. This gives us a better understanding of the implicit prior NNs place on function space and allows a direct comparison of the calibration of the NNGP and its finite-width analogue. We also examine the calibration of previous approaches to classification with the NNGP, which treat classification problems as regression to the one-hot labels. In this case the Bayesian posterior is exact, and we compare several heuristics to generate a categorical distribution over classes. We find these methods are well calibrated under distributional shift. Finally, we consider an infinite-width final layer in conjunction with a pre-trained embedding. This replicates the important practical use case of transfer learning and allows scaling to significantly larger datasets. As well as achieving competitive predictive accuracy, this approach is better calibrated than its finite width analogue.
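The "regression to the one-hot labels" approach mentioned above can be sketched in a few lines. This is only an illustration under stated assumptions: the kernel is the one-hidden-layer ReLU NNGP (arc-cosine) kernel rather than the deeper kernels studied in the paper, only the posterior mean is computed (the posterior variance is omitted), and the softmax with a temperature is a hypothetical stand-in for the heuristics the paper compares. All function and variable names are illustrative.

```python
import numpy as np


def relu_nngp_kernel(x1, x2):
    """One-hidden-layer ReLU NNGP (arc-cosine) kernel between rows of x1 and x2."""
    n1 = np.linalg.norm(x1, axis=1)[:, None]
    n2 = np.linalg.norm(x2, axis=1)[None, :]
    cos = np.clip(x1 @ x2.T / (n1 * n2), -1.0, 1.0)
    theta = np.arccos(cos)
    return n1 * n2 * (np.sin(theta) + (np.pi - theta) * cos) / (2.0 * np.pi)


def gp_posterior_mean(k_train, k_test_train, targets, noise=1e-3):
    """Exact GP regression posterior mean with a small observation noise."""
    regularized = k_train + noise * np.eye(k_train.shape[0])
    return k_test_train @ np.linalg.solve(regularized, targets)


def softmax(scores, temperature=0.1):
    """Hypothetical heuristic: turn regression outputs into class probabilities."""
    scaled = (scores - scores.max(axis=1, keepdims=True)) / temperature
    exponentials = np.exp(scaled)
    return exponentials / exponentials.sum(axis=1, keepdims=True)


def points_on_circle(angles):
    angles = np.asarray(angles)
    return np.stack([np.cos(angles), np.sin(angles)], axis=1)


# Toy data: two classes separated by angle on the unit circle.
x_train = points_on_circle([0.0, 0.2, 1.4, 1.6])
labels = np.array([0, 0, 1, 1])
x_test = points_on_circle([0.1, 1.5])

num_classes = 2
targets = np.eye(num_classes)[labels] - 1.0 / num_classes  # centered one-hot labels
mean = gp_posterior_mean(
    relu_nngp_kernel(x_train, x_train),
    relu_nngp_kernel(x_test, x_train),
    targets,
)
probs = softmax(mean)
```

The temperature controls how sharply the regression outputs are mapped to probabilities, so it directly affects calibration; the value used here is arbitrary.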

Finite Versus Infinite Neural Networks: an Empirical Study

Jaehoon Lee

Sam S. Schoenholz

Jeffrey Pennington

Ben Adlam

Roman Novak

Jascha Sohl-Dickstein

NeurIPS 2020

Preview abstract

We perform a careful, thorough, and large scale empirical study of the correspondence between wide neural networks and kernel methods. By doing so, we resolve a variety of open questions related to the study of infinitely wide neural networks. Our experimental results include: kernel methods outperform fully connected finite width networks, but underperform convolutional finite width networks; neural network Gaussian process (NNGP) kernels frequently outperform neural tangent (NT) kernels; ensembles of finite networks have reduced posterior variance and behave similarly to infinite networks; weight decay and the use of a large learning rate break the correspondence of finite and infinite networks; the NTK parameterization outperforms the standard parameterization for finite width networks; finite network performance depends non-monotonically on width in ways not captured by double descent phenomena. Our experiments additionally motivate an improved layer-wise scaling for weight decay which improves generalization in finite-width networks. Finally, we develop improved best practices for using NNGP and NT kernels for prediction. Using these best practices we achieve state-of-the-art results for non-trainable kernels on CIFAR-10 classification tasks.

Neural Tangents: Fast and Easy Infinite Neural Networks in Python

Roman Novak

Jiri Hron

Jaehoon Lee

Alex Alemi

Jascha Sohl-Dickstein

Sam Schoenholz

ICLR (2020)

Preview abstract

Neural Tangents is a library designed to enable research into infinite-width neural networks. It provides a high-level API for specifying complex and hierarchical neural network architectures. These networks can then be trained and evaluated either at finite-width as usual or in their infinite-width limit. Infinite-width networks can be trained analytically using exact Bayesian inference or using gradient descent via the Neural Tangent Kernel. Additionally, Neural Tangents provides tools to study gradient descent training dynamics of wide but finite networks in either function space or weight space.

The entire library runs out-of-the-box on CPU, GPU, or TPU. All computations can be automatically distributed over multiple accelerators with near-linear scaling in the number of devices.

Neural Tangents is available at https://github.com/google/neural-tangents

We also provide an accompanying interactive Colab notebook at https://colab.sandbox.google.com/github/neural-tangents/neural-tangents/blob/master/notebooks/neural_tangents_cookbook.ipynb

The Surprising Simplicity of the Early-Time Learning Dynamics of Neural Networks

Ben Adlam

Jeffrey Pennington

Wei Hu

Advances in Neural Information Processing Systems 33: Annual Conference on Neural Information Processing Systems 2020, NeurIPS 2020

Preview abstract

Modern neural networks are often regarded as complex black-box functions whose behavior is difficult to understand owing to their nonlinear dependence on the data and the nonconvexity in their loss landscapes. In this work, we show that these common perceptions can be completely false in the early phase of learning. In particular, we formally prove that, for a class of well-behaved input distributions, the early-time learning dynamics of a two-layer fully-connected neural network can be mimicked by training a simple linear model on the inputs. We additionally argue that this surprising simplicity can persist in networks with more layers and with convolutional architecture, which we verify empirically. Key to our analysis is to bound the spectral norm of the difference between the Neural Tangent Kernel (NTK) and an affine transform of the data kernel; however, unlike many previous results utilizing the NTK, we do not require the network to have disproportionately large width, and the network is allowed to escape the kernel regime later in training.

Bayesian Deep Convolutional Networks with Many Channels are Gaussian Processes

Roman Novak

Jaehoon Lee

Greg Yang

Jiri Hron

Dan Abolafia

Jeffrey Pennington

Jascha Sohl-Dickstein

ICLR (2019)

Preview abstract

There is a previously identified equivalence between wide fully connected neural networks (FCNs) and Gaussian processes (GPs). This equivalence enables, for instance, test set predictions that would have resulted from a fully Bayesian, infinitely wide trained FCN to be computed without ever instantiating the FCN, but by instead evaluating the corresponding GP. In this work, we derive an analogous equivalence for multi-layer convolutional neural networks (CNNs) both with and without pooling layers, and achieve state of the art results on CIFAR10 for GPs without trainable kernels. We also introduce a Monte Carlo method to estimate the GP corresponding to a given neural network architecture, even in cases where the analytic form has too many terms to be computationally feasible.

Surprisingly, in the absence of pooling layers, the GPs corresponding to CNNs with and without weight sharing are identical. As a consequence, translation equivariance, beneficial in finite channel CNNs trained with stochastic gradient descent (SGD), is guaranteed to play no role in the Bayesian treatment of the infinite channel limit – a qualitative difference between the two regimes that is not present in the FCN case. We confirm experimentally, that while in some scenarios the performance of SGD-trained finite CNNs approaches that of the corresponding GPs as the channel count increases, with careful tuning SGD-trained CNNs can significantly outperform their corresponding GPs, suggesting advantages from SGD training compared to fully Bayesian parameter estimation.
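The Monte Carlo idea in the abstract above (estimate the GP kernel by sampling random networks and averaging the covariance of their outputs) can be illustrated on a much simpler architecture. The sketch below assumes a one-hidden-layer fully connected ReLU network with standard normal weights, not a CNN, because that case has a closed-form kernel to check against. The function names, width, and sample count are illustrative assumptions.

```python
import numpy as np


def relu_nngp_exact(x):
    """Closed-form one-hidden-layer ReLU NNGP (arc-cosine) Gram matrix."""
    norms = np.linalg.norm(x, axis=1)
    outer = norms[:, None] * norms[None, :]
    cos = np.clip(x @ x.T / outer, -1.0, 1.0)
    theta = np.arccos(cos)
    return outer * (np.sin(theta) + (np.pi - theta) * cos) / (2.0 * np.pi)


def monte_carlo_nngp(x, width=32, num_networks=20000, seed=0):
    """Estimate the kernel as the average of f(x) f(x') over random networks.

    Each network is f(x) = v . relu(W x) / sqrt(width), with all entries of
    W and v drawn independently from N(0, 1).
    """
    rng = np.random.default_rng(seed)
    input_dim = x.shape[1]
    w = rng.standard_normal((num_networks, width, input_dim))
    v = rng.standard_normal((num_networks, width))
    hidden = np.maximum(np.einsum("swd,nd->swn", w, x), 0.0)
    outputs = np.einsum("sw,swn->sn", v, hidden) / np.sqrt(width)
    return outputs.T @ outputs / num_networks


x = np.array([[1.0, 0.0, 0.0], [0.6, 0.8, 0.0], [0.0, 0.0, 1.0]])
estimate = monte_carlo_nngp(x)
exact = relu_nngp_exact(x)
```

The estimator only needs forward passes through randomly initialized networks, which is why the same idea carries over to architectures whose analytic kernel is impractical to evaluate.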

Wide Neural Networks of Any Depth Evolve as Linear Models Under Gradient Descent

Jaehoon Lee

Sam Schoenholz

Roman Novak

Jascha Sohl-Dickstein

Jeffrey Pennington

NeurIPS (2019)

Preview abstract

A longstanding goal in deep learning research has been to precisely characterize training and generalization. However, the often complex loss landscapes of neural networks have made a theory of learning dynamics elusive. In this work, we show that for wide neural networks the learning dynamics simplify considerably and that, in the infinite width limit, they are governed by a linear model obtained from the first-order Taylor expansion of the network around its initial parameters. Furthermore, mirroring the correspondence between wide Bayesian neural networks and Gaussian processes, gradient-based training of wide neural networks with a squared loss produces test set predictions drawn from a Gaussian process with a particular compositional kernel. While these theoretical results are only exact in the infinite width limit, we nevertheless find excellent empirical agreement between the predictions of the original network and those of the linearized version even for finite practically-sized networks. This agreement is robust across different architectures, optimization methods, and loss functions.
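For a model that is linear in its parameters and trained with squared loss, the training-set predictions under gradient flow have the closed form f_t = y + exp(-η Θ t)(f_0 - y), where Θ is the tangent kernel on the training inputs. The sketch below checks this against explicit small-step gradient descent. It is an illustration only: the model here is exactly linear (random features), so it demonstrates the closed form rather than the wide-network approximation result, and the learning rate is assumed to absorb any loss normalization constants. All names are hypothetical.

```python
import numpy as np


def linearized_predictions(ntk, targets, f0, learning_rate, t):
    """Closed-form training predictions at time t under gradient flow on squared loss."""
    evals, evecs = np.linalg.eigh(ntk)
    # Matrix exponential of -learning_rate * t * ntk via the eigendecomposition.
    decay = (evecs * np.exp(-learning_rate * evals * t)) @ evecs.T
    return targets + decay @ (f0 - targets)


def gradient_descent_predictions(features, targets, theta0, learning_rate, steps):
    """Explicit gradient descent on 0.5 * ||features @ theta - targets||^2."""
    theta = theta0.copy()
    for _ in range(steps):
        residual = features @ theta - targets
        theta -= learning_rate * features.T @ residual
    return features @ theta


rng = np.random.default_rng(0)
features = rng.standard_normal((5, 20)) / np.sqrt(20)  # Jacobian of the linear model
targets = rng.standard_normal(5)
theta0 = rng.standard_normal(20)

ntk = features @ features.T  # tangent kernel on the training set
f0 = features @ theta0       # predictions at initialization

closed_form = linearized_predictions(ntk, targets, f0, learning_rate=1e-3, t=1000)
by_descent = gradient_descent_predictions(features, targets, theta0, 1e-3, steps=1000)
```

With a small step size the two agree closely; the remaining gap is the discretization error of gradient descent relative to gradient flow.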

Preview abstract

In recent years, state-of-the-art methods in computer vision have utilized increasingly deep convolutional neural network architectures (CNNs), with some of the most successful models employing hundreds or even thousands of layers. A variety of pathologies such as vanishing/exploding gradients make training such deep networks challenging. While residual connections and batch normalization do enable training at these depths, it has remained unclear whether such specialized architecture designs are truly necessary to train deep CNNs. In this work, we demonstrate that it is possible to train vanilla CNNs with ten thousand layers or more simply by using an appropriate initialization scheme. We derive this initialization scheme theoretically by developing a mean field theory for signal propagation and by characterizing the conditions for dynamical isometry, the equilibration of singular values of the input-output Jacobian matrix. These conditions require that the convolution operator be an orthogonal transformation in the sense that it is norm-preserving. We present an algorithm for generating such random initial orthogonal convolution kernels and demonstrate empirically that they enable efficient training of extremely deep architectures.
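One simple way to obtain a norm-preserving convolution is a delta-orthogonal kernel: every spatial tap is zero except the center, which holds a random orthogonal matrix acting on the channels. The sketch below is a minimal illustration of that construction, not necessarily the exact algorithm the abstract refers to. It assumes an odd kernel size, equal input and output channel counts, and circular padding; the function names are hypothetical.

```python
import numpy as np


def delta_orthogonal_kernel(kernel_size, channels, rng):
    """Kernel of shape (k, k, C, C): orthogonal matrix at the center tap, zeros elsewhere."""
    gaussian = rng.standard_normal((channels, channels))
    q, r = np.linalg.qr(gaussian)
    q = q * np.sign(np.diag(r))  # sign fix so q is drawn uniformly over orthogonal matrices
    kernel = np.zeros((kernel_size, kernel_size, channels, channels))
    center = kernel_size // 2
    kernel[center, center] = q
    return kernel


def circular_conv2d(x, kernel):
    """Naive 2D cross-correlation with circular padding.

    x has shape (H, W, C_in); kernel has shape (k, k, C_in, C_out).
    """
    size = kernel.shape[0]
    center = size // 2
    out = np.zeros(x.shape[:2] + (kernel.shape[3],))
    for i in range(size):
        for j in range(size):
            # After the roll, position p holds x[p + (i - center, j - center)].
            shifted = np.roll(x, shift=(center - i, center - j), axis=(0, 1))
            out += shifted @ kernel[i, j]
    return out


rng = np.random.default_rng(0)
kernel = delta_orthogonal_kernel(kernel_size=3, channels=8, rng=rng)
x = rng.standard_normal((6, 6, 8))
out = circular_conv2d(x, kernel)
# The convolution preserves the norm of its input.
norm_in, norm_out = np.linalg.norm(x), np.linalg.norm(out)
```

Because only the center tap is nonzero, the layer acts as the same orthogonal map at every pixel at initialization, so the input norm is preserved exactly.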

Preview abstract

In recent years, state-of-the-art methods in computer vision have utilized increasingly deep convolutional neural network architectures (CNNs), with some of the most successful models employing 1000 layers or more. Optimizing networks of such depth is extremely challenging and has up until now been possible only when the architectures incorporate special residual connections and batch normalization. In this work, we demonstrate that it is possible to train vanilla CNNs of depth 1500 or more simply by careful choice of initialization. We derive this initialization scheme theoretically, by developing a mean field theory for the dynamics of signal propagation in random CNNs with circular boundary conditions. We show that the order-to-chaos phase transition of such CNNs is similar to that of fully-connected networks, and we provide empirical evidence that ultra-deep vanilla CNNs are trainable if the weights and biases are initialized near the order-to-chaos transition.
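The order-to-chaos transition can be located numerically from two mean field quantities. The sketch below uses the standard fully connected recursion, which the abstract says behaves similarly to the CNN case: the pre-activation variance follows q_{l+1} = σ_w² E[φ(√q_l z)²] + σ_b² with z ~ N(0, 1), and at the fixed point q* the quantity χ = σ_w² E[φ'(√q* z)²] is below 1 in the ordered phase and above 1 in the chaotic phase. The tanh activation, the quadrature degree, the iteration count, and all names are assumptions made for illustration.

```python
import numpy as np

# Gauss-Hermite nodes and weights for the weight function exp(-z^2 / 2),
# rescaled so that sums approximate expectations over z ~ N(0, 1).
NODES, WEIGHTS = np.polynomial.hermite_e.hermegauss(80)
WEIGHTS = WEIGHTS / np.sqrt(2.0 * np.pi)


def gaussian_expectation(fn, variance):
    """Approximate E[fn(h)] for h ~ N(0, variance)."""
    return float(np.sum(WEIGHTS * fn(np.sqrt(variance) * NODES)))


def variance_fixed_point(sigma_w2, sigma_b2, q0=1.0, num_layers=500):
    """Iterate the variance map for a tanh network and return the final value."""
    q = q0
    for _ in range(num_layers):
        q = sigma_w2 * gaussian_expectation(lambda h: np.tanh(h) ** 2, q) + sigma_b2
    return q


def chi(sigma_w2, sigma_b2):
    """chi = sigma_w^2 * E[tanh'(h)^2] evaluated at the variance fixed point."""
    q_star = variance_fixed_point(sigma_w2, sigma_b2)
    return sigma_w2 * gaussian_expectation(lambda h: (1.0 - np.tanh(h) ** 2) ** 2, q_star)


ordered = chi(0.5, 0.0)  # below 1: signals contract with depth
chaotic = chi(4.0, 0.0)  # above 1: nearby inputs decorrelate with depth
```

Initializing near χ = 1 is what "near the order-to-chaos transition" means in this setting; scanning `chi` over a grid of (σ_w², σ_b²) values traces out the critical line.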
