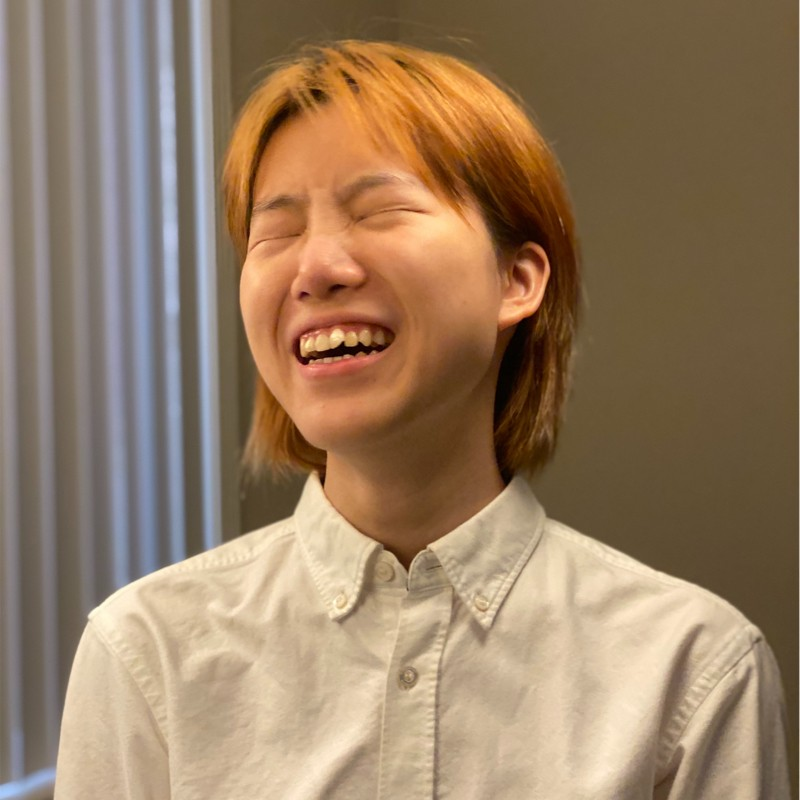

Yanhe Chen

Yanhe is a Software Engineer at Google, dedicated to building innovative, user-centric products at the intersection of artificial intelligence and user experience.


Authored Publications


Vibe Coding XR: Accelerating AI + XR Prototyping with XR Blocks and Gemini

Benjamin Hersh

Nels Numan

Jiahao Ren

Xingyue Chen

Robert Timothy Bettridge

Faraz Faruqi

Anthony 'Xiang' Chen

Steve Toh

Google XR, Google (2026)


While large language models have accelerated software development through "vibe coding", prototyping intelligent Extended Reality (XR) experiences remains inaccessible due to the friction of complex game engines and low-level sensor integration. To bridge this gap, we contribute XR Blocks, an open-source, modular WebXR framework that abstracts spatial computing complexities into high-level, human-centered primitives. Building upon this foundation, we present Vibe Coding XR, an end-to-end rapid prototyping workflow that leverages LLMs to translate natural language intent directly into functional XR software. Using a web-based interface, creators can transform high-level prompts (e.g., "create a dandelion that reacts to hand") into interactive WebXR applications in under a minute. We provide a preliminary technical evaluation on a pilot dataset (VCXR60) alongside diverse application scenarios highlighting mixed-reality realism, multi-modal interaction, and generative AI integrations. By democratizing spatial software creation, this work empowers practitioners to bypass low-level hurdles and rapidly move from "idea to reality." Code and live demos are available at https://xrblocks.github.io/gem and https://github.com/google/xrblocks.


XR Blocks: Accelerating Human-Centered AI + XR Innovation

Nels Numan

Evgenii Alekseev

Geonsun Lee

Alex Cooper

Min Xia

Scott Chung

Jeremy Nelson

Xiuxiu Yuan

Jolica Dias

Tim Bettridge

Benjamin Hersh

Michelle Huynh

Konrad Piascik

Ricardo Cabello

Google, XR, XR Labs (2025)


We are on the cusp where Artificial Intelligence (AI) and Extended Reality (XR) are converging to unlock new paradigms of interactive computing. However, a significant gap exists between the ecosystems of these two fields: while AI research and development is accelerated by mature frameworks like PyTorch and benchmarks like LMArena, prototyping novel AI-driven XR interactions remains a high-friction process, often requiring practitioners to manually integrate disparate, low-level systems for perception, rendering, and interaction. To bridge this gap, we present XR Blocks, a cross-platform framework designed to accelerate human-centered AI + XR innovation. XR Blocks provides a modular architecture with plug-and-play components for the core abstractions in AI + XR: user, world, peers; interface, context, and agents. Crucially, it is designed with the mission of "minimum code from idea to reality", accelerating rapid prototyping of complex AI + XR apps. Built upon accessible technologies (WebXR, three.js, TensorFlow, Gemini), our toolkit lowers the barrier to entry for XR creators. We demonstrate its utility through a set of open-source templates, samples, and advanced demos, empowering the community to quickly move from concept to interactive prototype.


DialogLab: Authoring, Simulating, and Testing Dynamic Group Conversations in Hybrid Human-AI Conversations

Erzhen Hu

Mingyi Li

Alex Olwal

Seongkook Heo

Proceedings of the 38th Annual ACM Symposium on User Interface Software and Technology, ACM (2025), 210:1-20


Designing compelling multi-party conversations involving both humans and AI agents presents significant challenges, particularly in balancing scripted structure with emergent, human-like interactions. We introduce DialogLab, a prototyping toolkit for authoring, simulating, and testing hybrid human-AI dialogues. DialogLab provides a unified interface to configure conversational scenes, define agent personas, manage group structures, specify turn-taking rules, and orchestrate transitions between scripted narratives and improvisation. Crucially, DialogLab allows designers to introduce controlled deviations from the script—through configurable agents that emulate human unpredictability—to systematically probe how conversations adapt and recover. DialogLab facilitates rapid iteration and evaluation of complex, dynamic multi-party human-AI dialogues. An evaluation with both end users and domain experts demonstrates that DialogLab supports efficient iteration and structured verification, with applications in training, rehearsal, and research on social dynamics. Our findings show the value of integrating real-time, human-in-the-loop improvisation with structured scripting to support more realistic and adaptable multi-party conversation design.


Sensible Agent: A Framework for Unobtrusive Interaction with Proactive AR Agent

Geonsun Lee

Min Xia

Nels Numan

Dinesh Manocha

Proceedings of the 38th Annual ACM Symposium on User Interface Software and Technology (UIST), ACM (2025), pp. 22


Proactive AR agents promise context-aware assistance, but their interactions often rely on explicit voice prompts or responses, which can be disruptive or socially awkward. We introduce Sensible Agent, a framework designed for unobtrusive interaction with these proactive agents. Sensible Agent dynamically adapts both “what” assistance to offer and, crucially, “how” to deliver it, based on real-time multimodal context sensing. Informed by an expert workshop (n=12) and a data annotation study (n=40), the framework leverages egocentric cameras, multimodal sensing, and Large Multimodal Models (LMMs) to infer context and suggest appropriate actions delivered via minimally intrusive interaction modes. We demonstrate our prototype on an XR headset through a user study (n=10) in both AR and VR scenarios. Results indicate that Sensible Agent significantly reduces perceived intrusiveness and interaction effort compared to a voice-prompted baseline, while maintaining high utility.

