Robust Neural Machine Translation

July 29, 2019

Posted by Yong Cheng, Software Engineer, Google Research

Quick links

In recent years, neural machine translation (NMT) using Transformer models has experienced tremendous success. Based on deep neural networks, NMT models are usually trained end-to-end on very large parallel corpora (input/output text pairs) in an entirely data-driven fashion and without the need to impose explicit rules of language.

Despite this huge success, NMT models can be sensitive to minor perturbations of the input, which can manifest as a variety of different errors, such as under-translation, over-translation or mistranslation. For example, given a German sentence, the state-of-the-art NMT model, Transformer, will yield a correct translation.

“Der Sprecher des Untersuchungsausschusses hat angekündigt, vor Gericht zu ziehen, falls sich die geladenen Zeugen weiterhin weigern sollten, eine Aussage zu machen.”

(Machine translation to English: “The spokesman of the Committee of Inquiry has announced that if the witnesses summoned continue to refuse to testify, he will be brought to court.”),

(Machine translation to English: “The spokesman of the Committee of Inquiry has announced that if the witnesses summoned continue to refuse to testify, he will be brought to court.”),

But, when we apply a subtle change to the input sentence, say from geladenen to the synonym vorgeladenen, the translation becomes very different (and in this case, incorrect):

“Der Sprecher des Untersuchungsausschusses hat angekündigt, vor Gericht zu ziehen, falls sich die vorgeladenen Zeugen weiterhin weigern sollten, eine Aussage zu machen.”

(Machine translation to English: “The investigative committee has announced that he will be brought to justice if the witnesses who have been invited continue to refuse to testify.”).

(Machine translation to English: “The investigative committee has announced that he will be brought to justice if the witnesses who have been invited continue to refuse to testify.”).

This lack of robustness in NMT models prevents many commercial systems from being applicable to tasks that cannot tolerate this level of instability. Therefore, learning robust translation models is not just desirable, but is often required in many scenarios. Yet, while the robustness of neural networks has been extensively studied in the computer vision community, only a few prior studies on learning robust NMT models can be found in literature.

In “Robust Neural Machine Translation with Doubly Adversarial Inputs” (to appear at ACL 2019), we propose an approach that uses generated adversarial examples to improve the stability of machine translation models against small perturbations in the input. We learn a robust NMT model to directly overcome adversarial examples generated with knowledge of the model and with the intent of distorting the model predictions. We show that this approach improves the performance of the NMT model on standard benchmarks.

Training a Model with AdvGen

An ideal NMT model would generate similar translations for separate inputs that exhibit small differences. The idea behind our approach is to perturb a translation model with adversarial inputs in the hope of improving the model’s robustness. It does this using an algorithm called Adversarial Generation (AdvGen), which generates plausible adversarial examples for perturbing the model and then feeds them back into the model for defensive training. While this method is inspired by the idea of generative adversarial networks (GANs), it does not rely on a discriminator network, but simply applies the adversarial example in training, effectively diversifying and extending the training set.

The first step is to perturb the model using AdvGen. We start by using Transformer to calculate the translation loss based on a source input sentence, a target input sentence and a target output sentence. Then AdvGen randomly selects some words in the source sentence, assuming a uniform distribution. Each word has an associated list of similar words, i.e., candidates that can be used for substitution, from which AdvGen selects the word that is most likely to introduce errors in Transformer output. Then, this generated adversarial sentence is fed back into Transformer, initiating the defense stage.

Model Performance

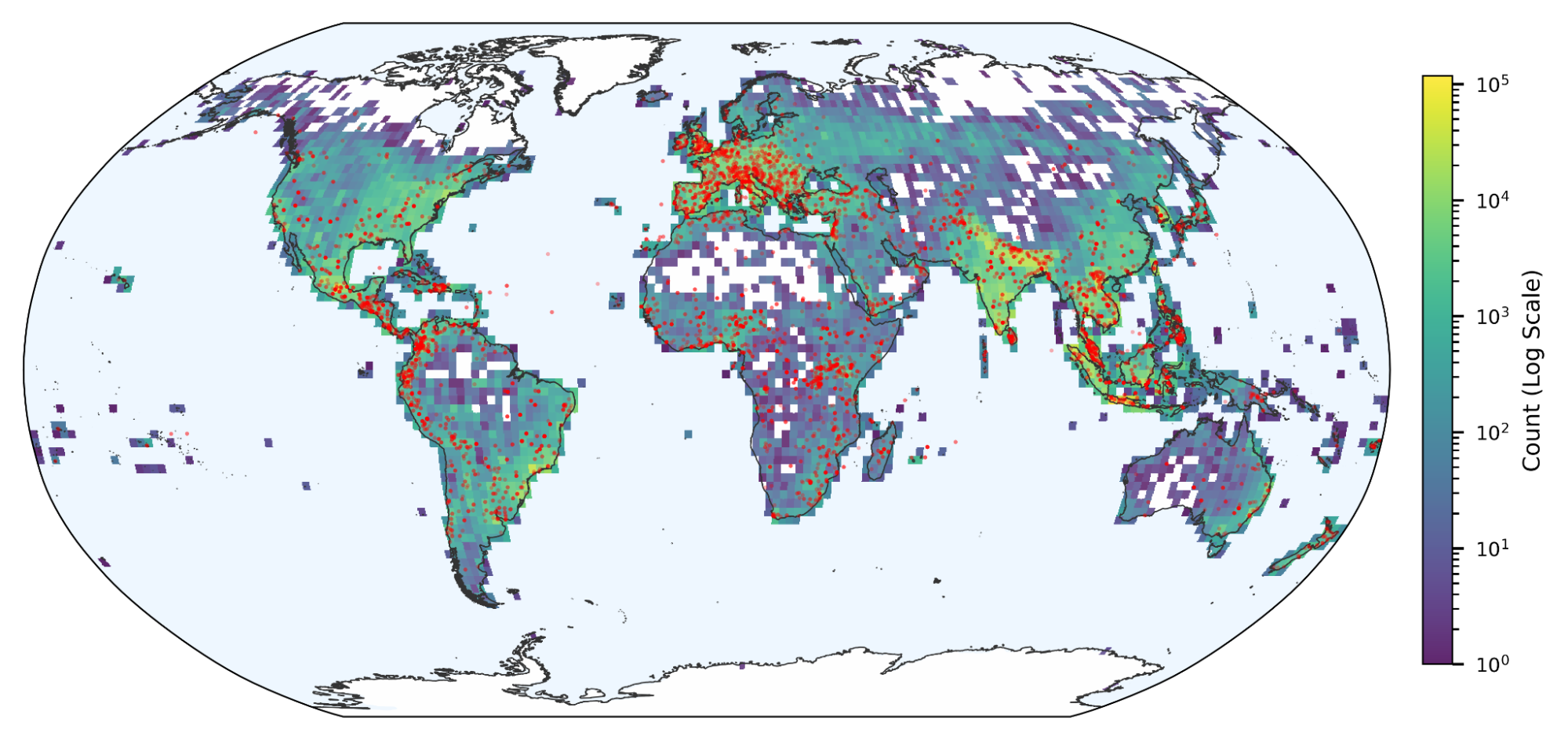

We demonstrate the effectiveness of our approach by applying it to the standard Chinese-English and English-German translation benchmarks. We observed a notable improvement of 2.8 and 1.6 BLEU points, respectively, compared to the competitive Transformer model, achieving a new state-of-the-art performance.

|

| Comparison of Transformer model (Vaswani et al., 2017) on standard benchmarks. |

|

| Comparison of Transformer, Miyao et al. and Cheng et al. on artificial noisy inputs. |

Acknowledgements

This research was conducted by Yong Cheng, Lu Jiang and Wolfgang Macherey. Additional thanks go to our leadership Andrew Moore and Julia (Wenli) Zhu.

Quick links

×

❮

❯