Introducing Groundsource: Turning news reports into data with Gemini

March 12, 2026

Oleg Zlydenko, Software Engineer, Rotem Mayo, Software Engineer, and Deborah Cohen, Research Scientist, Google Research

Today, we’re introducing Groundsource, a new scalable methodology that leverages Gemini to transform unstructured global news into actionable, historical data. Our first, open-access Groundsource dataset for urban flash floods comprises 2.6 million records, paving the way for more accurate, life-saving forecasts.


Natural disasters pose a continuous threat to global populations and economies. Every year, they affect millions of people and cost billions of dollars in direct damages. Robust historical baselines are critical to advancing climate research and, ultimately, to giving communities adequate warning about natural disasters so that they can stay safe. Historical data enables scientists around the world to better mitigate hazards through hydrological modeling and to ground forward-looking projections in empirical evidence. Historical records also inform practical applications ranging from urban planning to insurance and emergency response.

That’s why, today, we are introducing Groundsource — a scalable framework for extracting verified ground truth from unstructured data, allowing us to map the historical footprint of disasters with unprecedented precision. We first used this methodology to create a unique global dataset for flash floods, comprising 2.6 million historical flood events spanning more than 150 countries. We are making this flash floods dataset openly available to provide a reliable source of high-quality data that can help with the modeling and prediction of flash floods in urban areas. The same methodology could also potentially be applied to build historical datasets for other hazards to accelerate global crisis resilience efforts.

The challenge: Global data scarcity

While some natural disasters, like seismic events, are tracked by unified global sensor networks, hydro-meteorological hazards like floods lack a standardized observation infrastructure. Accurate forecasting for flash floods has long been severely hampered by a lack of high-quality, global historical data for model training and validation. This “data desert” poses a critical challenge.

Existing archives, such as the satellite-based Global Flood Database (GFD) and the Dartmouth Flood Observatory (DFO), offer valuable inundation footprints but face physical limitations like cloud interference, satellite revisit times, and a tendency to capture only large, long-lasting disasters. The Global Disaster Alert and Coordination System (GDACS) — a joint United Nations and European Commission initiative monitoring humanitarian impact — provides essential data with an inventory of approximately 10,000 entries, but is primarily focused on high-impact events.

While 10,000 records may seem substantial, they represent a drop in the bucket compared to the data needed to train and verify global-scale AI. Data scarcity is particularly problematic for localized or quick-moving disasters, such as flash floods. Because these events often go unrecorded in traditional hazard databases, creating predictive models that function reliably on a global scale is nearly impossible.

Groundsource: Turning news reports into data with Gemini

To address this global data scarcity, Groundsource curates flood details by analyzing available news reports and transforms public information into a structured, localized event archive covering more than 150 countries and spanning from the year 2000 to the present. The core innovation of Groundsource lies in its ability to leverage advanced AI to extract signals from global news media.

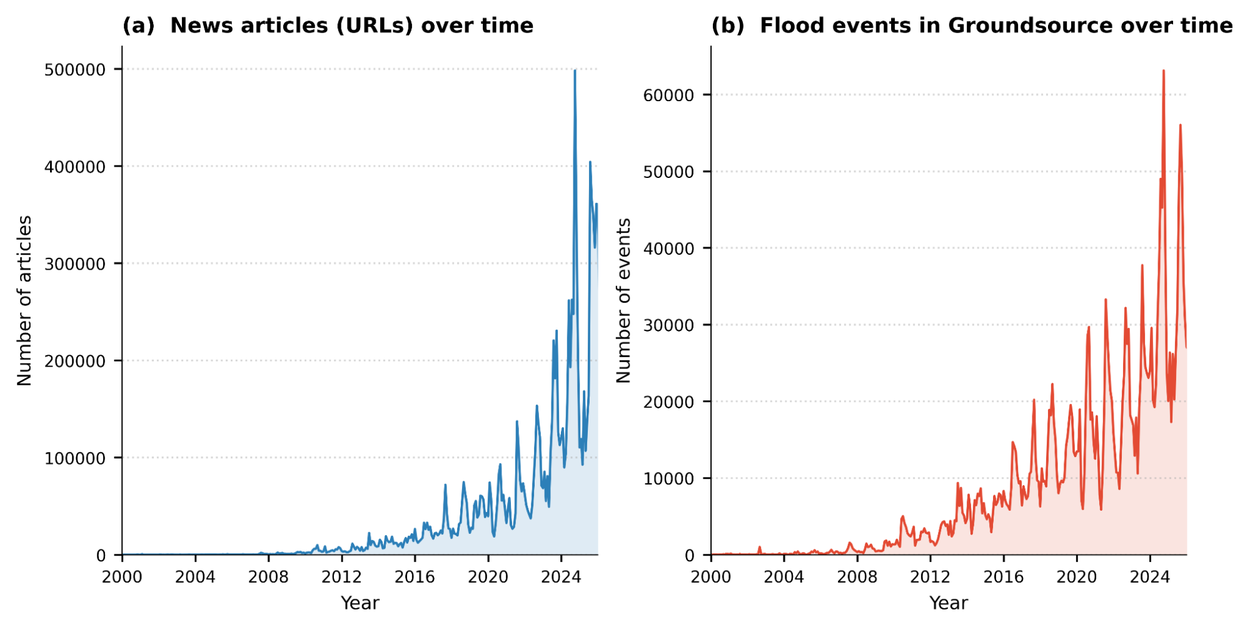

This chart illustrates the exponential growth of digitized news and the corresponding increase in flood events captured by the Groundsource pipeline, showing a significant density of data in recent years (2020–2025).

There is an abundance of unstructured data about historical events, such as news articles, government reports, and local bulletins, but extracting this information manually at scale is impossible. Our methodology analyzes news reports in which flooding is a primary subject. We then use the Google Read Aloud user-agent to isolate the primary text of articles in 80 languages, and that text is translated into English via the Cloud Translation API.
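The ingestion stage can be pictured as a short chain of filters. The Python below is a minimal sketch under stated assumptions, not the production pipeline: text extraction is assumed to have already happened, the `translate` callable is a hypothetical stand-in for the Cloud Translation API, and `is_flood_primary` is a simple keyword-share heuristic substituted for the relevance filter, whose details the post does not describe. For simplicity, the sketch translates before filtering so that a single English keyword list suffices.

```python
from dataclasses import dataclass
from typing import Callable, Iterable, List

# Hypothetical keyword stems for a crude relevance heuristic (not the real filter).
FLOOD_TERMS = ("flood", "inundat", "submerged")


@dataclass
class Article:
    url: str
    language: str  # ISO 639-1 code, e.g. "en", "es"
    text: str      # primary article text, assumed already extracted


@dataclass
class NormalizedArticle:
    url: str
    source_language: str
    english_text: str


def is_flood_primary(text: str, min_share: float = 0.3) -> bool:
    """Treat flooding as a primary subject if enough sentences mention it."""
    normalized = text.replace("!", ".").replace("?", ".")
    sentences = [s for s in normalized.split(".") if s.strip()]
    if not sentences:
        return False
    hits = sum(any(term in s.lower() for term in FLOOD_TERMS) for s in sentences)
    return hits / len(sentences) >= min_share


def ingest(
    articles: Iterable[Article],
    translate: Callable[[str, str], str],
    min_share: float = 0.3,
) -> List[NormalizedArticle]:
    """Translate non-English text to English, then keep flood-focused articles."""
    kept: List[NormalizedArticle] = []
    for article in articles:
        if article.language == "en":
            english = article.text
        else:
            # translate(text, source_language) -> English text (hypothetical stand-in)
            english = translate(article.text, article.language)
        if is_flood_primary(english, min_share):
            kept.append(NormalizedArticle(article.url, article.language, english))
    return kept
```

Passing `translate` in as a parameter keeps the sketch testable offline; a real deployment would plug in an actual translation client and a far stronger relevance classifier.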

The most critical step of the extraction process is done using the Gemini Large Language Model (LLM). We engineered a sophisticated prompt that guides Gemini through a strict analytical verification process:

- Classification: The model distinguishes between reports of actual, ongoing, or past floods and articles that merely discuss future warnings, policy meetings, or general risk modeling.

- Temporal reasoning: Gemini anchors relative references (e.g., "last Tuesday") against an article's publication date to determine precise event timing.

- Spatial precision: The system identifies granular locations (neighborhoods and streets) and maps them to standardized spatial polygons using Google Maps Platform.
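Temporal anchoring is the easiest of these steps to illustrate deterministically. In Groundsource this reasoning is performed by Gemini; the sketch below instead resolves a few fixed phrase patterns against the publication date, only to show what "anchoring" means. The function name and the supported patterns are hypothetical, and "last Tuesday" is assumed to mean the most recent Tuesday strictly before publication.

```python
import re
from datetime import date, timedelta
from typing import Optional

WEEKDAYS = ["monday", "tuesday", "wednesday", "thursday", "friday", "saturday", "sunday"]


def resolve_relative_date(phrase: str, published: date) -> Optional[date]:
    """Anchor a relative date phrase to an article's publication date.

    Handles 'today', 'yesterday', 'N days ago', and 'last/on <weekday>';
    returns None for anything else.
    """
    p = phrase.strip().lower()
    if p == "today":
        return published
    if p == "yesterday":
        return published - timedelta(days=1)
    m = re.fullmatch(r"(\d+) days? ago", p)
    if m:
        return published - timedelta(days=int(m.group(1)))
    m = re.fullmatch(r"(?:last|on) (" + "|".join(WEEKDAYS) + r")", p)
    if m:
        target = WEEKDAYS.index(m.group(1))
        # Step back 1 to 7 days to the most recent matching weekday
        # strictly before the publication date.
        delta = (published.weekday() - target) % 7 or 7
        return published - timedelta(days=delta)
    return None
```

For an article published on Thursday, March 12, 2026, "last Tuesday" resolves to March 10, 2026. Phrases such as "the Tuesday before last" or "over the weekend" fall outside these fixed patterns and return `None`, which illustrates why flexible reasoning over the full article text is valuable here.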

The technical validation of Groundsource confirms its reliability for high-stakes research. In manual reviews, we found that 60% of extracted events were accurate in both location and timing. Crucially, 82% were accurate enough to be practically useful for real-world analysis — for example, by capturing the correct administrative district or pinpointing the event within a single day of its reported peak.
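The two accuracy figures correspond to a strict and a lenient criterion. The sketch below shows one way to compute both from manually reviewed events. The `Review` fields and the exact rules (strict: exact location and same day; lenient: location correct at least at district level and within one day of the reported peak) are our assumptions based on the examples in the text, not the published evaluation protocol.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, Tuple


@dataclass
class Review:
    exact_location: bool  # reviewer judged the exact place correct
    district_match: bool  # reviewer judged the administrative district correct
    extracted_date: date  # date assigned by the extraction step
    reported_peak: date   # peak date according to the reviewer's sources


def score(reviews: Iterable[Review], day_tolerance: int = 1) -> Tuple[float, float]:
    """Return (strict_accuracy, useful_accuracy) over the reviewed events."""
    rs = list(reviews)
    if not rs:
        raise ValueError("no reviews to score")
    strict = useful = 0
    for r in rs:
        day_error = abs((r.extracted_date - r.reported_peak).days)
        if r.exact_location and day_error == 0:
            strict += 1
        if (r.exact_location or r.district_match) and day_error <= day_tolerance:
            useful += 1
    return strict / len(rs), useful / len(rs)
```

Because every strictly correct event also satisfies the lenient rule, the second value can never be lower than the first, mirroring the relationship between the 60% and 82% figures.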

The coverage provided by Groundsource represents a massive-scale expansion over existing archives. By transforming unstructured media into data, we have generated 2.6 million events, far more than the approximately 10,000 entries in GDACS or the records found in other traditional monitoring systems. Furthermore, spatiotemporal matching shows that Groundsource captured between 85% and 100% of the severe flood events recorded by GDACS between 2020 and 2026, demonstrating its effectiveness at identifying high-impact disasters alongside smaller, localized events.
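Spatiotemporal matching against a reference catalog such as GDACS amounts to measuring recall: the share of reference events that have at least one Groundsource event nearby in both space and time. The sketch below is a minimal version. The `Event` type, the 50 km and 3-day thresholds, and the use of great-circle (haversine) distance between single points are illustrative assumptions, since the post does not state the matching rules, and real records would carry polygons rather than points.

```python
import math
from dataclasses import dataclass
from datetime import date
from typing import Sequence


@dataclass
class Event:
    lat: float  # degrees
    lon: float  # degrees
    day: date


def haversine_km(a: Event, b: Event) -> float:
    """Great-circle distance between two events on a spherical Earth."""
    r = 6371.0  # mean Earth radius in km
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * r * math.asin(min(1.0, math.sqrt(h)))  # clamp guards rounding error


def recall(reference: Sequence[Event], candidates: Sequence[Event],
           max_km: float = 50.0, max_days: int = 3) -> float:
    """Fraction of reference events matched by some candidate in space and time."""
    if not reference:
        raise ValueError("empty reference set")
    matched = sum(
        any(
            abs((c.day - ref.day).days) <= max_days and haversine_km(ref, c) <= max_km
            for c in candidates
        )
        for ref in reference
    )
    return matched / len(reference)
```

The nested loop compares every pair of events, which is fine for illustration but would need a spatial and temporal index (for example, bucketing by grid cell and date) to scale to millions of records.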

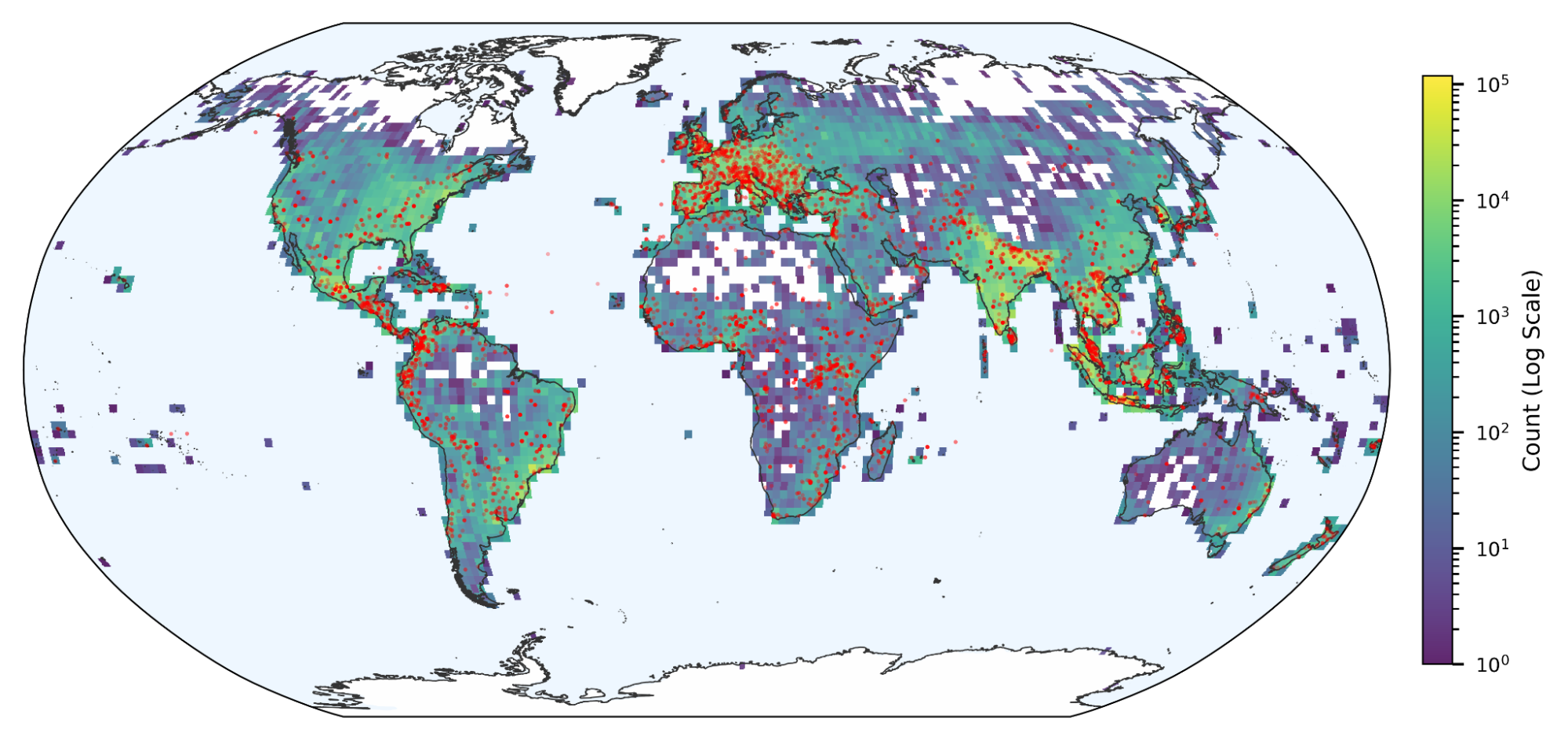

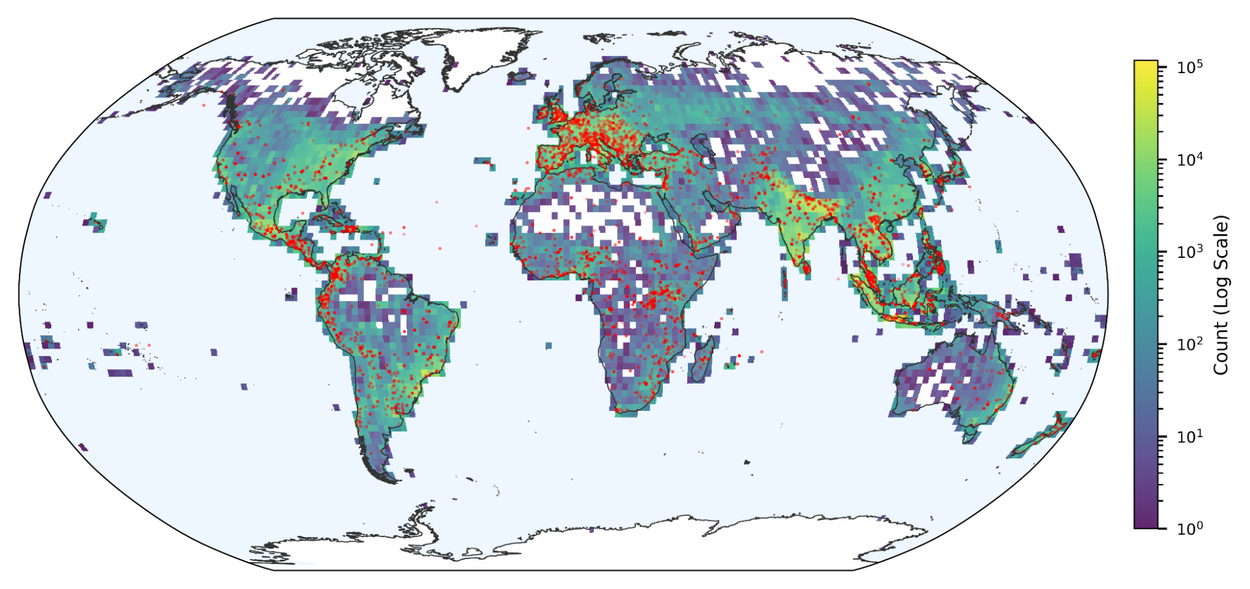

A global map showing the density of flood events in Groundsource. Red dots indicate floods from GDACS.

The impact: Enabling better forecasting for natural disasters

By using this rich, structured data, we can now provide near-global urban flash flood forecasts up to 24 hours before an event. We are rolling out these forecasts in Google’s Flood Hub, significantly broadening Google’s flood coverage.

This work joins our Google Earth AI family of geospatial models and datasets, demonstrating scientific leadership in the crisis resilience space by showing that LLMs can systematically transform the world's "unstructured memory" — the news — into a robust scientific baseline. Moreover, this methodology has the potential to be applied to address data gaps for other natural hazards that lack precise historical records, such as droughts, landslides, and avalanches.

By turning the world’s news into actionable data, we aren’t just documenting the past; we’re building a more resilient future. We are currently refining our model, working to expand our coverage to more rural areas, and integrating new data sources. Moving forward, we will apply this approach to other hazard types where a lack of ground-truth data has traditionally made crises impossible to predict, working towards a future where no community is surprised by a natural disaster.

Acknowledgements

Many people were involved in the development of this effort. We would like to especially thank: Amitay Sicherman, Avinatan Hassidim, Deborah Cohen, Frederik Kratzert, Gila Loike, Grey Nearing, Ido Zemach, Juliet Rothenberg, Moral Bootbool, Oleg Zlydenko, Oren Gilon, Reuven Sayag, Rotem Mayo, Shmuel Fronman, Yonatan Nakar, and Yossi Matias.