Improving the academic workflow: Introducing two AI agents for better figures and peer review

April 8, 2026

Jinsung Yoon, Research Scientist, and Tomas Pfister, Director, Google Cloud

We introduce two AI agents to streamline academic research: PaperVizAgent, a visualizer agent for drawing academic figures, and ScholarPeer, a reviewer agent that automatically and rigorously evaluates academic papers.

Academic research is evolving at an unprecedented pace driven by the rapid advancements in AI. The academic research workflow is notoriously rigorous, involving far more than just conceptualizing an idea and writing a paper. One hurdle many researchers face is how to effectively visualize their research. While AI can draft text, creating the complex methodology diagrams and precise statistical plots required for top-tier conferences and journals is significantly more difficult. Furthermore, the scientific community relies on the peer review process to maintain the integrity of published research. However, the exponential growth of paper submissions has severely strained this system, leading to reviewer fatigue and inconsistent evaluations. As language models and multi-agent systems become more sophisticated, we see their potential not just as subjects of study, but as active participants in the scientific process itself.

To that end, we introduce two novel agentic frameworks: (i) PaperVizAgent (formerly known as PaperBanana), a visualizer agent for drawing academic figures, and (ii) ScholarPeer, a reviewer agent that automatically and rigorously evaluates academic papers (including inlined diagrams). These agents are designed specifically to assist with the academic research lifecycle, empowering scientists to focus on innovation rather than administrative overhead. Our evaluations show that PaperVizAgent consistently generates expert-quality figures that significantly outperform leading baselines (GPT-Image-1.5, Nano-Banana-Pro, Paper2Any), while ScholarPeer delivers highly critical, literature-grounded reviews that beat state-of-the-art automated reviewers.

PaperVizAgent: Generating publication-ready figures

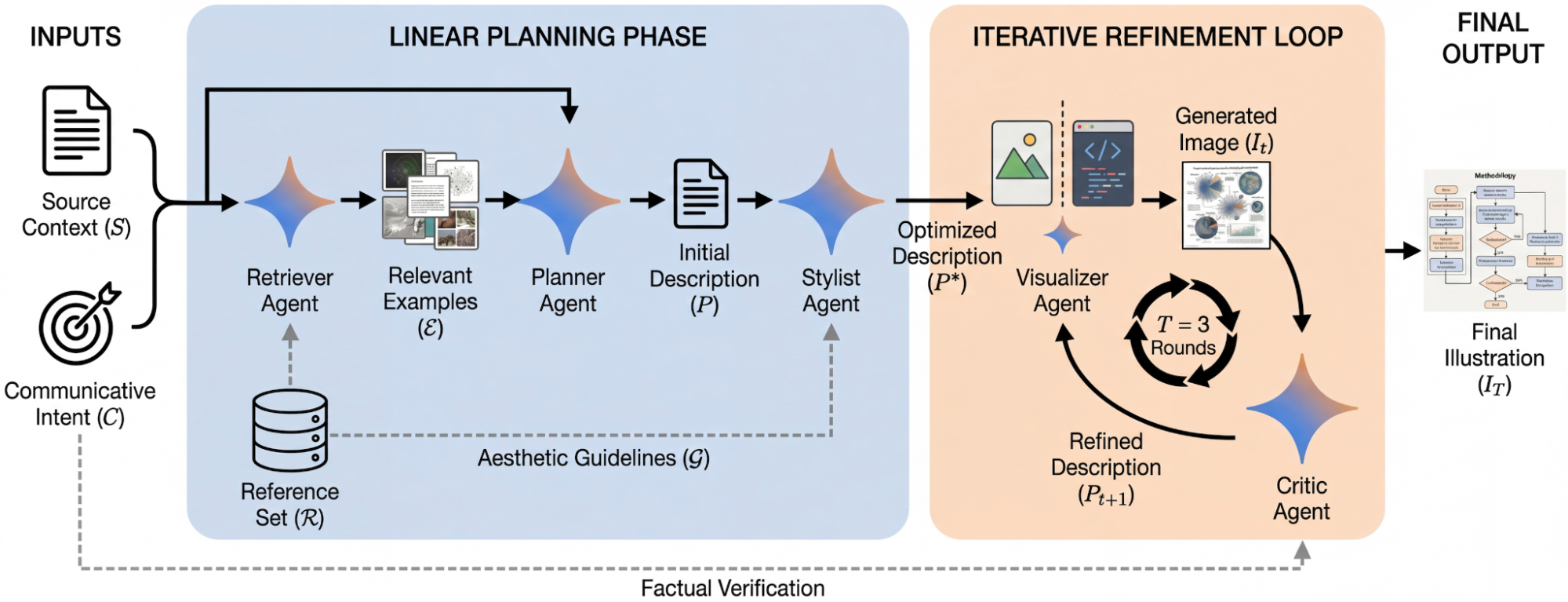

PaperVizAgent is an autonomous framework designed to generate publication-ready academic illustrations from academic text. By bridging the gap between technical descriptions and visual communication, PaperVizAgent allows researchers to create professional-grade figures directly from their manuscripts. To initiate the process, a researcher provides two inputs:

- Source context: Typically the method sections of a manuscript with technical details of the research.

- Communicative intent: A detailed figure caption that describes what the visual should convey.

The PaperVizAgent framework orchestrates a collaborative team of five specialized AI agents: (1) a retriever, (2) a planner, (3) a stylist, (4) a visualizer, and (5) a critic. First, the retriever and planner agents gather references (e.g., relevant figures from existing literature) and organize the content. Next, the stylist agent synthesizes aesthetic guidelines to ensure the output matches academic standards. The visualizer then renders an image or generates executable Python code for statistical plots. Finally, the critic agent evaluates the output against the original text. If inconsistencies are found, the critic provides targeted feedback to the visualizer agent, triggering another round of refinement. This iterative loop ensures the final illustration is both visually appealing and technically accurate.

Given the source context and communicative intent, PaperVizAgent retrieves relevant reference examples and synthesizes a stylistically optimized description. It then uses an iterative refinement loop to transform the description into the final illustration.
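As an illustration only, the control flow described above might be sketched as follows. This is not the PaperVizAgent implementation: every name here (`generate_figure`, `Critique`, the five callables, and `max_rounds`) is hypothetical, and each agent is passed in as a plain function so that the sketch stays independent of any particular model or API.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional


@dataclass
class Critique:
    """Hypothetical critic verdict; not a type from the actual system."""
    consistent: bool    # True when the figure matches the source text
    feedback: str = ""  # targeted notes handed back to the visualizer


def generate_figure(
    source_context: str,
    intent: str,
    retrieve: Callable[[str, str], List[str]],
    plan: Callable[[str, str, List[str]], str],
    style: Callable[[str, List[str]], str],
    visualize: Callable[[str, Optional[str]], str],
    critique: Callable[[str, str, str], Critique],
    max_rounds: int = 3,  # assumed cap; the source does not state one
) -> str:
    """Run retriever -> planner -> stylist, then loop visualizer <-> critic."""
    if max_rounds < 1:
        raise ValueError("max_rounds must be at least 1")

    references = retrieve(source_context, intent)
    outline = plan(source_context, intent, references)
    description = style(outline, references)

    feedback: Optional[str] = None
    figure = ""
    for _ in range(max_rounds):
        # The visualizer sees the styled description plus any critic feedback.
        figure = visualize(description, feedback)
        verdict = critique(figure, source_context, intent)
        if verdict.consistent:
            break
        feedback = verdict.feedback
    return figure
```

In this sketch the loop stops either when the critic reports no inconsistencies or when the assumed round limit is reached, in which case the most recent draft is returned.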

Examples of methodology diagrams generated by PaperVizAgent.

Results

In comprehensive experiments, PaperVizAgent consistently outperformed leading baselines, including direct prompting, few-shot prompting, and Paper2Any (a state-of-the-art approach for visualization). The system was evaluated using a comparative scoring metric (on a 0-100 scale, where a higher score is better) across four critical dimensions: faithfulness, conciseness, readability, and aesthetics. In this evaluation, we used an LLM judge that was calibrated using human-generated figures as inputs, with the human performance baseline set to 50.0.

PaperVizAgent achieved an overall score of 60.2, significantly surpassing all evaluated baselines such as GPT-Image-1.5, Nano-Banana-Pro, and Paper2Any. Notably, it is the only framework to exceed the established human baseline of 50.0 in its overall rating. Broken down by dimension, the system particularly excels in conciseness and aesthetics, scoring well above the human threshold in both categories. It also achieved human-competitive results in generating statistical plots, showing its versatility. These results represent a significant leap forward in automated illustration.

PaperVizAgent outperforms all baseline models across five key metrics, achieving results competitive with the human baseline.

Emulating senior reviewers with ScholarPeer

ScholarPeer is a context-aware, search-enabled multi-agent framework designed to automate and elevate the peer review process by following the workflow of a senior researcher.

Unlike standard language models that treat reviewing as a simple text-generation task, ScholarPeer relies on a dual-stream process of context acquisition and active verification. It dynamically constructs a domain narrative using a sub-domain historian agent, which grounds the review in live, web-scale literature. A baseline scout acts as an adversarial auditor, specifically hunting for datasets or comparative baselines the authors may have missed. Finally, a multi-aspect Q&A engine rigorously verifies the paper's technical claims, ensuring a deep and fact-based critique. The final review report includes a detailed summary, strengths, weaknesses, and questions for the authors, much like a standard expert peer review.

Given an input paper, ScholarPeer employs a dual-stream information retrieval process. The context and knowledge module uses a summarizer and a search-enabled literature review to compress internal and external information. These inputs feed into the multi-aspect Q&A engine, which generates and answers probing questions regarding the paper's novelty and technical soundness. Finally, the review generator uses these inputs and conference-specific review guidelines to generate the final review.
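To make the data flow concrete, here is a minimal sketch of how the stages described above could be wired together. It is an assumption-laden illustration rather than ScholarPeer's actual code: `review_paper`, `ReviewContext`, and all of the callables are hypothetical names, and each agent is represented by an ordinary function.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List


@dataclass
class ReviewContext:
    """Hypothetical container for the two context streams."""
    paper_summary: str            # internal stream: compressed paper content
    domain_narrative: str         # external stream: sub-domain historian output
    missing_baselines: List[str]  # external stream: baseline scout findings


def review_paper(
    paper_text: str,
    guidelines: str,
    summarize: Callable[[str], str],
    historian: Callable[[str], str],
    baseline_scout: Callable[[str], List[str]],
    ask_questions: Callable[[ReviewContext], List[str]],
    answer: Callable[[str, str, ReviewContext], str],
    write_review: Callable[[ReviewContext, Dict[str, str], str], str],
) -> str:
    """Context acquisition, then Q&A verification, then review generation."""
    # Stage 1: gather internal and external context.
    context = ReviewContext(
        paper_summary=summarize(paper_text),
        domain_narrative=historian(paper_text),
        missing_baselines=baseline_scout(paper_text),
    )

    # Stage 2: generate probing questions and answer each one
    # against the paper and the gathered context.
    questions = ask_questions(context)
    qa = {q: answer(q, paper_text, context) for q in questions}

    # Stage 3: write the review under venue-specific guidelines.
    return write_review(context, qa, guidelines)
```

Passing the agents as arguments keeps the sketch testable with simple stand-in functions; a real system would replace them with search-enabled model calls.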

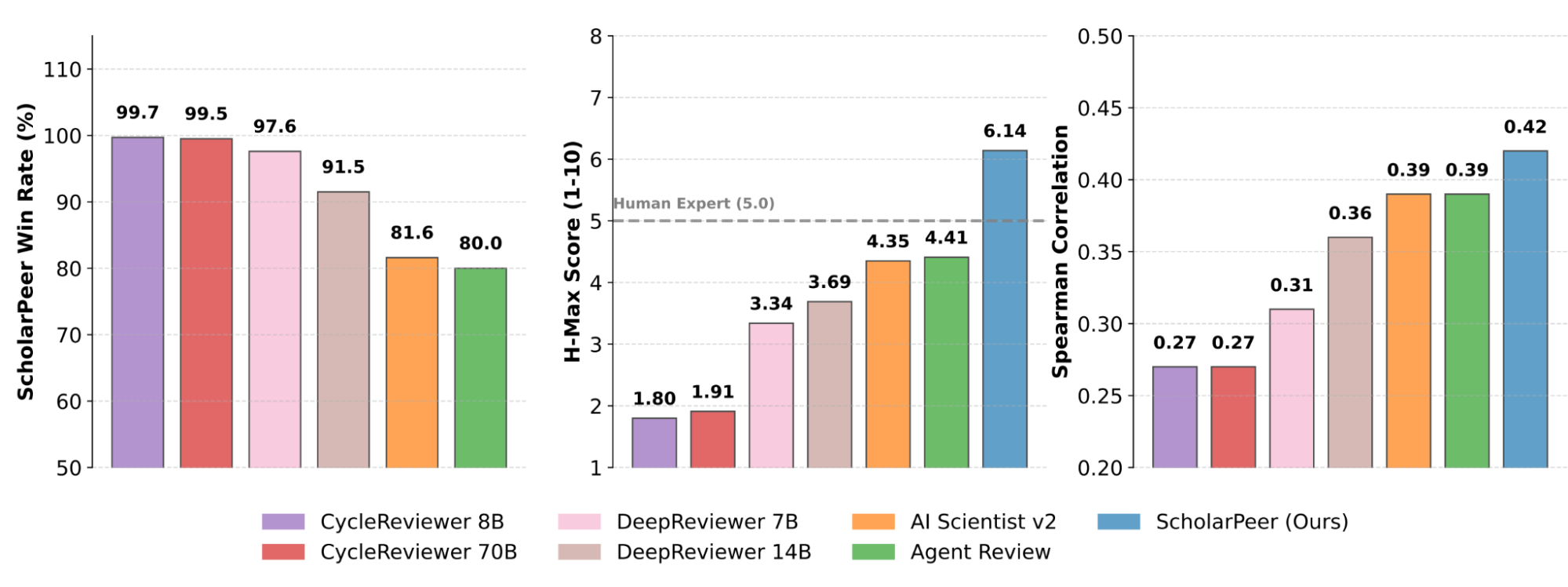

ScholarPeer's performance demonstrates the immense potential of integrating active web search with multi-agent orchestration for academic evaluation. When tested on extensive public datasets, ScholarPeer achieved significant win rates against state-of-the-art automated reviewing approaches in side-by-side evaluations. More importantly, the system's active verification workflow drastically reduced the gap between AI-generated feedback and human-level diversity, producing reviews that are highly critical, realistic, and deeply grounded in existing literature.

Comparative evaluation of ScholarPeer against existing frameworks on public datasets. Left: Win rate of ScholarPeer against reviews from fine-tuned models and agentic baselines. Middle: Average single-side score of reviews generated by various frameworks (the best human review is scored as 5). Right: Correlation between human-expert evaluations and automated review framework rankings.
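For readers unfamiliar with side-by-side evaluation, a win rate is commonly computed from per-comparison verdicts as in the sketch below. The source does not say how ties were handled, so the half-credit `tie_weight` default, the verdict labels, and the function name `win_rate` are all assumptions made for illustration.

```python
from collections import Counter
from typing import Iterable

VALID_VERDICTS = {"win", "loss", "tie"}


def win_rate(verdicts: Iterable[str], tie_weight: float = 0.5) -> float:
    """Fraction of side-by-side comparisons won, with ties given partial credit.

    tie_weight=0.5 is an assumed convention, not one stated in the source.
    """
    counts = Counter(verdicts)
    unknown = set(counts) - VALID_VERDICTS
    if unknown:
        raise ValueError(f"unexpected verdict labels: {sorted(unknown)}")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("at least one verdict is required")
    return (counts["win"] + tie_weight * counts["tie"]) / total


# Example: two wins, one loss, and one tie give (2 + 0.5) / 4 = 0.625.
example = win_rate(["win", "win", "loss", "tie"])
```

Setting `tie_weight=0.0` instead counts only outright wins, which yields a stricter figure.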

What’s next for the scientific community

PaperVizAgent and ScholarPeer are part of our broader effort to explore AI-assisted research. By tackling two distinct but equally demanding phases of the publication lifecycle, these tools serve as collaborators that elevate the quality of scientific discourse and can, alongside other tools, accelerate the dissemination of knowledge.

While these two frameworks offer immediate and tangible benefits to the academic community, they are just the beginning of our journey. We envision a future where researchers have access to a rich, interconnected ecosystem of AI assistants seamlessly integrated into every facet of the scientific workflow, and we are actively continuing our work in this space.

Acknowledgements

We would like to thank Palash Goyal, Dawei Zhu, Mihir Parmar, Rui Meng, Yiwen Song, Yale Song, Hamid Palangi, Xiyu Wei, Sujian Li and Burak Gokturk for their valuable contributions to this work.

Disclaimer

PaperVizAgent and ScholarPeer are experimental research prototypes, not production-ready tools. Their automated feedback, figures, and reviews are intended only for research exploration and should not be relied upon as the definitive basis for editorial or publication decisions.