Estimating the Impact of Training Data with Reinforcement Learning

October 28, 2020

Posted by Jinsung Yoon and Sercan O. Arik, Research Scientists, Cloud AI Team, Google Research

Quick links

Recent work suggests that not all data samples are equally useful for training, particularly for deep neural networks (DNNs). Indeed, if a dataset contains low-quality or incorrectly labeled data, one can often improve performance by removing a significant portion of training samples. Moreover, in cases where there is a mismatch between the train and test datasets (e.g., due to difference in train and test location or time), one can also achieve higher performance by carefully restricting samples in the training set to those most relevant for the test scenario. Because of the ubiquity of these scenarios, accurately quantifying the values of training samples has great potential for improving model performance on real-world datasets.

|

|

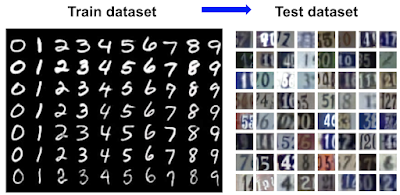

| Top: Examples of low-quality samples (noisy/crowd-sourced); Bottom: Examples of a train and test mismatch. |

In addition to improving model performance, assigning a quality value to individual data can also enable new use cases. It can be used to suggest better practices for data collection, e.g., what kinds of additional data would benefit the most, and can be used to construct large-scale training datasets more efficiently, e.g., by web searching using the labels as keywords and filtering out less valuable data.

In “Data Valuation Using Deep Reinforcement Learning”, accepted at ICML 2020, we address the challenge of quantifying the value of training data using a novel approach based on meta-learning. Our method integrates data valuation into the training procedure of a predictor model that learns to recognize samples that are more valuable for the given task, improving both predictor and data valuation performance. We have also launched four AI Hub Notebooks that exemplify the use cases of DVRL and are designed to be conveniently adapted to other tasks and datasets, such as domain adaptation, corrupted sample discovery and robust learning, transfer learning on image data and data valuation.

Quantifying the Value of Data

Not all data are equal for a given ML model — some have greater relevance for the task at hand or are more rich in informative content than others. So how does one evaluate the value of a single datum? At the granularity of a full dataset, it is straightforward; one can simply train a model on the entire dataset and use its performance on a test set as its value. However, estimating the value of a single datum is far more difficult, especially for complex models that rely on large-scale datasets, because it is computationally infeasible to re-train and re-evaluate a model on all possible subsets.

To tackle this, researchers have explored permutation-based methods (e.g., influence functions), and game theory-based methods (e.g., data Shapley). However, even the best current methods are far from being computationally feasible for large datasets and complex models, and their data valuation performance is limited. Concurrently, meta learning-based adaptive weight assignment approaches have been developed to estimate the weight values using a meta-objective. But rather than prioritizing learning from high value data samples, their data value mapping is typically based on gradient descent learning or other heuristic approaches that alter the conventional predictor model training dynamics, which can result in performance changes that are unrelated to the value of individual data points.

Data Valuation Using Reinforcement Learning (DVRL)

To infer the data values, we propose a data value estimator (DVE) that estimates data values and selects the most valuable samples to train the predictor model. This selection operation is fundamentally non-differentiable and thus conventional gradient descent-based methods cannot be used. Instead, we propose to use reinforcement learning (RL) such that the supervision of the DVE is based on a reward that quantifies the predictor performance on a small (but clean) validation set. The reward guides the optimization of the policy towards the action of optimal data valuation, given the state and input samples. Here, we treat the predictor model learning and evaluation framework as the environment, a novel application scenario of RL-assisted machine learning.

Results

We evaluate the data value estimation quality of DVRL on multiple types of datasets and use cases.

- Model performance after removing high/low value samples

Removing low value samples from the training dataset can improve the predictor model performance, especially in the cases where the training dataset contains corrupted samples. On the other hand, removing high value samples, especially if the dataset is small, decreases the performance significantly. Overall, the performance after removing high/low value samples is a strong indicator for the quality of data valuation. Leave-One-Out and Data Shapley).

- Robust learning with noisy labels

We consider how reliably DVRL can learn with noisy data in an end-to-end way, without removing the low-value samples. Ideally, noisy samples should get low data values as DVRL converges and a high performance model would be returned.

We show state-of-the-art results with DVRL in minimizing the impact of noisy labels. These also demonstrate that DVRL can scale to complex models and large-scale datasets.

Robust learning with noisy labels. Test accuracy for ResNet-32 and WideResNet-28-10 on CIFAR-10 and CIFAR-100 datasets with 40% of uniform random noise on labels. DVRL outperforms other popular methods that are based on meta-learning.

-

Domain adaptation

We consider the scenario where the training dataset comes from a substantially different distribution from the validation and testing datasets. Data valuation is expected to be beneficial for this task by selecting the samples from the training dataset that best match the distribution of the validation dataset. We focus on the three cases: (1) a training set based on image search results (low-quality web-scraped) applied to the task of predicting skin lesion classification using HAM 10000 data (high-quality medical); (2) an MNIST training set for a digit recognition task on USPS data (different visual domain); (3) e-mail spam data to detect spam applied to an SMS dataset (different task). DVRL yields significant improvements for domain adaptation, by jointly optimizing the data valuator and corresponding predictor model.

Conclusions

We propose a novel meta learning framework for data valuation which determines how likely each training sample will be used in training of the predictor model. Unlike previous works, our method integrates data valuation into the training procedure of the predictor model, allowing the predictor and DVE to improve each other's performance. We model this data value estimation task using a DNN trained through RL with a reward obtained from a small validation set that represents the target task performance. In a computationally-efficient way, DVRL can provide high quality ranking of training data that is useful for domain adaptation, corrupted sample discovery and robust learning. We show that DVRL significantly outperforms alternative methods on diverse types of tasks and datasets.

Acknowledgements

We gratefully acknowledge the contributions of Tomas Pfister.