Co-training Transformer with Videos and Images Improves Action Recognition

March 1, 2022

Posted by Bowen Zhang, Student Researcher and Jiahui Yu, Senior Research Scientist, Google Research, Brain Team


Action recognition has become a major focus area for the research community because many applications can benefit from improved modeling, such as video retrieval, video captioning, and video question-answering. Transformer-based approaches have recently demonstrated state-of-the-art performance on several benchmarks. However, Transformer models need more data than ConvNets to learn good visual priors, while action recognition datasets are relatively small in scale. As a result, large Transformer models are typically first trained on image datasets and later fine-tuned on a target action recognition dataset.

While the current pre-training and fine-tuning paradigm for action recognition is straightforward and yields strong empirical results, it may be overly restrictive for building general-purpose action recognition models. Compared to a dataset like ImageNet, which covers a wide range of object recognition classes, action recognition datasets like Kinetics and Something-Something-v2 (SSv2) pertain to limited topics. For example, Kinetics includes object-centric actions like “cliff diving” and “ice climbing”, while SSv2 contains object-agnostic activities like “pretending to put something onto something else.” As a result, we observed poor performance when adapting an action recognition model that has been fine-tuned on one dataset to another, disparate dataset.

Differences in objects and video backgrounds among datasets make it even harder to learn a general-purpose action recognition model. Even as video datasets grow in size, prior work suggests that significant data augmentation and regularization are necessary to achieve strong performance. This latter finding may indicate that the model quickly overfits to the target dataset, which in turn hinders its capacity to generalize to other action recognition tasks.

In “Co-training Transformer with Videos and Images Improves Action Recognition”, we propose a training strategy, named CoVeR, that leverages both image and video data to jointly learn a single general-purpose action recognition model. Our approach is supported by two main findings. First, disparate video datasets cover a diverse set of activities, and training on them together in a single model could lead to a model that excels at a wide range of activities. Second, video is an ideal source for learning motion information, while images are well suited to learning structural appearance. Leveraging a diverse distribution of image examples may therefore help video models build robust spatial representations. Concretely, CoVeR first pre-trains the model on an image dataset; then, during fine-tuning, it simultaneously trains a single model on multiple video and image datasets to build robust spatial and temporal representations for a general-purpose video understanding model.
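The handoff from image pre-training to video fine-tuning amounts to building the video model's parameters from an image checkpoint. The Architecture section states that only the non-temporal parameters are pre-trained on JFT, so this minimal Python sketch copies every parameter the image model shares with the video model and initializes the rest (here, temporal attention) with a caller-supplied function. The parameter names, shapes, and zero initializer are hypothetical and are not taken from the CoVeR code.

```python
import math

def init_video_from_image(image_params, video_shapes, init_fn):
    """Build video-model parameters from an image-pretrained checkpoint.

    image_params: name -> weights learned during image pre-training.
    video_shapes: name -> shape for every parameter of the video model.
    init_fn:      shape -> fresh weights for parameters the image model lacks.
    Returns the new parameter dict and the names copied from the checkpoint.
    """
    params, loaded = {}, []
    for name, shape in video_shapes.items():
        if name in image_params:
            params[name] = image_params[name]  # reuse non-temporal weights
            loaded.append(name)
        else:
            params[name] = init_fn(shape)      # e.g. temporal attention starts fresh
    return params, loaded

def zeros(shape):
    # Hypothetical initializer: a flat list of zeros with prod(shape) entries.
    return [0.0] * math.prod(shape)

# Hypothetical parameter names and shapes for a single transformer block.
video_shapes = {
    "block0/temporal_attn/qkv": (8, 24),
    "block0/spatial_attn/qkv": (8, 24),
    "block0/mlp/dense": (8, 32),
}
image_params = {
    "block0/spatial_attn/qkv": [0.5] * 192,
    "block0/mlp/dense": [0.1] * 256,
}
params, loaded = init_video_from_image(image_params, video_shapes, zeros)
print(sorted(loaded))  # ['block0/mlp/dense', 'block0/spatial_attn/qkv']
```

Because the temporal parameters have no counterpart in an image model, they are the only ones left to the initializer in this sketch.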

Architecture and Training Strategy

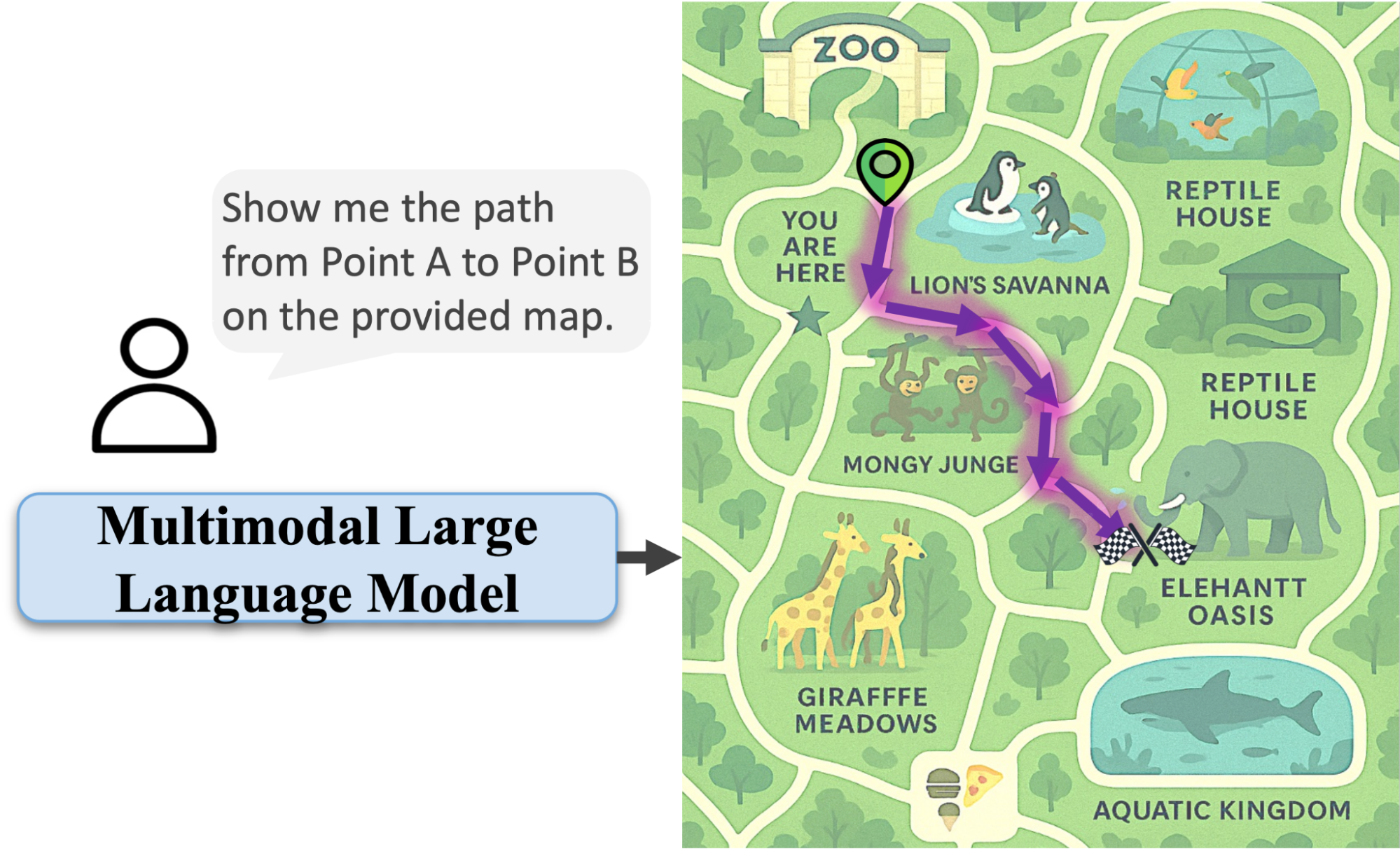

We applied the CoVeR approach to the recently proposed spatial-temporal video transformer TimeSFormer, which contains 24 layers of transformer blocks. Each block contains one temporal attention layer, one spatial attention layer, and one multilayer perceptron (MLP) layer. To learn from multiple video and image datasets, we adopt a multi-task learning paradigm and equip the action recognition model with multiple classification heads. We pre-train all non-temporal parameters on the large-scale JFT dataset. During fine-tuning, each batch of videos and images is sampled from multiple video and image datasets, with each dataset's sampling rate proportional to its size. Each sample in the batch is processed by the TimeSFormer and then routed to the classifier for its dataset to get the predictions.
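The block structure described above (temporal attention, then spatial attention, then an MLP) can be illustrated with a NumPy sketch of one divided space-time block. This is a simplified, hypothetical version and not the TimeSFormer code: it uses a single attention head with no learned query/key/value projections, parameter-free layer normalization, and a ReLU MLP, and it omits the classification token and positional embeddings.

```python
import numpy as np

def layer_norm(x, eps=1e-6):
    # Normalize over the feature axis (no learned scale/shift in this sketch).
    mu = x.mean(axis=-1, keepdims=True)
    var = x.var(axis=-1, keepdims=True)
    return (x - mu) / np.sqrt(var + eps)

def self_attention(x):
    # Single-head dot-product attention over axis -2, without learned
    # query/key/value projections (a simplification). x: (..., n, d).
    d = x.shape[-1]
    scores = x @ np.swapaxes(x, -1, -2) / np.sqrt(d)     # (..., n, n)
    scores = scores - scores.max(axis=-1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    weights = weights / weights.sum(axis=-1, keepdims=True)
    return weights @ x                                    # (..., n, d)

def divided_block(x, w1, w2):
    """One divided space-time block on tokens x of shape (T, N, D):
    T frames, N patches per frame, D features per token."""
    # Temporal attention: each patch position attends across the T frames.
    xt = np.swapaxes(layer_norm(x), 0, 1)                 # (N, T, D)
    x = x + np.swapaxes(self_attention(xt), 0, 1)         # back to (T, N, D)
    # Spatial attention: the N patches within each frame attend to each other.
    x = x + self_attention(layer_norm(x))
    # Two-layer MLP with ReLU, applied to every token independently.
    x = x + np.maximum(layer_norm(x) @ w1, 0.0) @ w2
    return x

rng = np.random.default_rng(0)
T, N, D = 8, 16, 32                            # hypothetical sizes
w1 = rng.normal(size=(D, 4 * D)) / np.sqrt(D)
w2 = rng.normal(size=(4 * D, D)) / np.sqrt(4 * D)
out = rng.normal(size=(T, N, D))
for _ in range(24):                            # 24 blocks; weights shared only for brevity
    out = divided_block(out, w1, w2)
print(out.shape)                               # (8, 16, 32)

image = rng.normal(size=(1, N, D))             # a still image as a 1-frame clip
print(divided_block(image, w1, w2).shape)      # (1, 16, 32)
```

A real model gives each of the 24 blocks its own weights. Feeding a still image as a single-frame clip (T = 1) makes temporal attention trivial, since each token can attend only to itself; whether CoVeR feeds images exactly this way is an assumption of this sketch.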

Compared with the standard training strategy, CoVeR has two advantages. First, as the model is directly trained on multiple datasets, the learned video representations are more general and can be directly evaluated on those datasets without additional fine-tuning. Second, Transformer-based models may easily overfit to a smaller video distribution, thus degrading the generalization of the learned representations. Training on multiple datasets mitigates this challenge by reducing the risk of overfitting.

CoVeR adopts a multi-task learning strategy trained on multiple datasets, each with its own classifier.
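The size-proportional sampling and per-dataset routing described in the Architecture paragraph can be sketched in plain Python. This is a minimal, hypothetical illustration rather than the CoVeR implementation: the dataset sizes are made-up numbers, random feature vectors stand in for TimeSFormer outputs, the ImNet head assumes the 1,000 ImageNet-1k classes, and the batch-averaged softmax cross-entropy is an assumed loss (the post does not specify one). The K400, SSv2, and MiT heads use those datasets' 400, 174, and 339 classes.

```python
import math
import random
from collections import Counter

def sample_sources(sizes, batch_size, rng):
    """Pick the source dataset of each example in a mixed batch,
    with probability proportional to the dataset's size."""
    names = list(sizes)
    return rng.choices(names, weights=[sizes[n] for n in names], k=batch_size)

def linear(weight, feat):
    # weight: one row per class; feat: one feature vector.
    return [sum(w * f for w, f in zip(row, feat)) for row in weight]

def route_to_heads(features, sources, heads):
    """Send each shared-backbone feature to its own dataset's classifier head."""
    return [linear(heads[src], feat) for feat, src in zip(features, sources)]

def cross_entropy(logits, label):
    # Numerically stable softmax cross-entropy for a single example.
    m = max(logits)
    log_z = m + math.log(sum(math.exp(z - m) for z in logits))
    return log_z - logits[label]

def multitask_loss(logits, labels):
    # Average per-example loss; each example is scored only by its own head.
    return sum(cross_entropy(l, y) for l, y in zip(logits, labels)) / len(labels)

rng = random.Random(0)
sizes = {"K400": 200, "SSv2": 200, "MiT": 400, "ImNet": 1200}  # hypothetical sizes
counts = Counter(sample_sources(sizes, 10_000, rng))
print(counts)  # ImNet should account for roughly 60% of the draws

num_classes = {"K400": 400, "SSv2": 174, "MiT": 339, "ImNet": 1000}
dim = 4  # hypothetical feature size
heads = {n: [[rng.gauss(0.0, 0.1) for _ in range(dim)] for _ in range(c)]
         for n, c in num_classes.items()}
sources = sample_sources(sizes, 8, rng)
features = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in sources]
labels = [rng.randrange(num_classes[s]) for s in sources]
logits = route_to_heads(features, sources, heads)
loss = multitask_loss(logits, labels)
```

Because every dataset keeps its own head, evaluating on K400 in this sketch only requires reading out `heads["K400"]`, which is why a co-trained model can be scored on each dataset without a separate fine-tuning stage.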

Benchmark Results

We evaluate CoVeR by training on the Kinetics-400 (K400), Kinetics-600 (K600), Kinetics-700 (K700), Something-Something-v2 (SSv2), and Moments-in-Time (MiT) datasets. Compared with other approaches, such as TimeSFormer, Video Swin Transformer, TokenLearner, ViViT, MoViNet, VATT, VidTr, and OmniSource, CoVeR establishes new state-of-the-art results on multiple datasets, as shown below. Unlike previous approaches that train a dedicated model for a single dataset, a model trained with CoVeR can be applied directly to multiple datasets without further fine-tuning.

| Model | Pretrain | Finetune | K400 Accuracy (%) |
|---|---|---|---|
| VATT | AudioSet+Videos | K400 | 82.1 |
| OmniSource | IG-Kinetics-65M | K400 | 83.6 |
| ViViT | JFT-300M | K400 | 85.4 |
| Video SwinTrans | ImageNet21K+external | K400 | 86.8 |
| CoVeR | JFT-3B | K400+SSv2+MiT+ImNet | 87.2 |

Accuracy comparison on the Kinetics-400 (K400) dataset.

| Model | Pretrain | Finetune | SSv2 Accuracy (%) |
|---|---|---|---|
| TimeSFormer | ImageNet21K | SSv2 | 62.4 |
| VidTr | ImageNet21K | SSv2 | 63.0 |
| ViViT | ImageNet21K | SSv2 | 65.9 |
| Video SwinTrans | ImageNet21K+external | SSv2 | 69.6 |
| CoVeR | JFT-3B | K400+SSv2+MiT+ImNet | 70.9 |

Accuracy comparison on the Something-Something-v2 (SSv2) dataset.

| Model | Pretrain | Finetune | MiT Accuracy (%) |
|---|---|---|---|
| ViViT | ImageNet21K | MiT | 38.5 |
| VidTr | ImageNet21K | SSv2 | 41.1 |
| CoVeR | JFT-3B | K400+SSv2+MiT+ImNet | 46.1 |

Accuracy comparison on the Moments-in-Time (MiT) dataset.

Transfer Learning

We use transfer learning to further verify video action recognition performance, comparing CoVeR's co-training on multiple datasets with the standard training paradigm. Specifically, we train on the source datasets, then fine-tune and evaluate on the target dataset. The results are summarized below.

We first consider K400 as the target dataset. CoVeR co-trained on SSv2 and MiT improves top-1 accuracy over K400→K400 (where the model is trained on K400 and then fine-tuned on K400) by 1.3%, over SSv2→K400 by 1.7%, and over MiT→K400 by 0.4%. Similarly, when transferring to SSv2, CoVeR achieves 2%, 1.8%, and 1.1% improvements over SSv2→SSv2, K400→SSv2, and MiT→SSv2, respectively. These gains on K400 and SSv2 indicate that CoVeR co-trained on multiple datasets could learn better visual representations than the standard training paradigm, which is useful for downstream tasks.

Comparison of transfer learning with the representation learned by CoVeR and with the standard training paradigm. A→B means the model is trained on dataset A and then fine-tuned on dataset B.
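The A→B protocol behind these comparisons can be written as a small helper. The post does not say how the classifier is handled when moving to the target dataset, so this Python sketch assumes that the source heads are discarded, a fresh head is attached for the target, and the whole model is then fine-tuned by a caller-supplied function; `transfer`, `new_head`, and `no_op_finetune` are hypothetical names, and the toy backbone is a placeholder.

```python
import copy

def transfer(model, target, num_classes, new_head_fn, finetune_fn):
    """A -> B transfer: keep the representation learned on the source
    dataset(s), replace the source classifier heads with one fresh head
    for the target dataset, then fine-tune on the target."""
    new_model = {
        "backbone": copy.deepcopy(model["backbone"]),  # learned representation
        "heads": {target: new_head_fn(num_classes)},   # source heads are dropped
    }
    return finetune_fn(new_model, target)

# Hypothetical stand-ins: a tiny "backbone", zero-initialized heads, and a
# fine-tuning function that does nothing (real training would go here).
def new_head(num_classes, dim=2):
    return [[0.0] * dim for _ in range(num_classes)]

def no_op_finetune(model, target):
    return model

# e.g. a model co-trained on SSv2 and MiT, then transferred to K400.
cotrained = {"backbone": {"w": [1.0, 2.0]},
             "heads": {"SSv2": new_head(174), "MiT": new_head(339)}}
k400_model = transfer(cotrained, "K400", 400, new_head, no_op_finetune)
print(list(k400_model["heads"]))  # ['K400']
```

Under this sketch, the K400→K400 baseline is the same call with a model trained only on K400, so the comparison isolates what the source training contributes to the backbone.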

Conclusion

In this work, we present CoVeR, a training paradigm that jointly learns action recognition and object recognition tasks in a single model, with the aim of constructing a general-purpose action recognition framework. Our analysis indicates that it may be beneficial to integrate many video datasets into one multi-task learning paradigm. We highlight the importance of continuing to learn on image data during fine-tuning to maintain robust spatial representations. Our empirical findings suggest that CoVeR can learn a single general-purpose video understanding model that achieves strong performance across many action recognition datasets without an additional stage of fine-tuning for each downstream application.

Acknowledgements

We would like to thank Christopher Fifty, Wei Han, Andrew M. Dai, Ruoming Pang, and Fei Sha for preparation of the CoVeR paper; Yue Zhao, Hexiang Hu, Zirui Wang, Zitian Chen, Qingqing Huang, Claire Cui, and Yonghui Wu for helpful discussions and feedback; and others on the Brain Team for support throughout this project.
