Beyond Short Snippets: Deep Networks for Video Classification

April 8, 2015

Posted by Software Engineers George Toderici and Sudheendra Vijayanarasimhan

Quick links

Convolutional Neural Networks (CNNs) have recently shown rapid progress in advancing the state of the art of detecting and classifying objects in static images, automatically learning complex features in pictures without the need for manually annotated features. But what if one wanted not only to identify objects in static images, but also analyze what a video is about? After all, a video isn’t much more than a string of static images linked together in time.

As it turns out, video analysis provides even more information to the object detection and recognition task performed by CNN’s by adding a temporal component through which motion and other information can be also be used to improve classification. However, analyzing entire videos is challenging from a modeling perspective because one must model variable length videos with a fixed number of parameters. Not to mention that modeling variable length videos is computationally very intensive.

In Beyond Short Snippets: Deep Networks for Video Classification, to be presented at the 2015 Computer Vision and Pattern Recognition conference (CVPR 2015), we1 evaluated two approaches - feature pooling networks and recurrent neural networks (RNNs) - capable of modeling variable length videos with a fixed number of parameters while maintaining a low computational footprint. In doing so, we were able to not only show that learning a high level global description of the video’s temporal evolution is very important for accurate video classification, but that our best networks exhibited significant performance improvements over previously published results on the Sports 1 million dataset (Sports-1M).

In previous work, we employed 3D-convolutions (meaning convolutions over time and space) over short video clips - typically just a few seconds - to learn motion features from raw frames implicitly and then aggregate predictions at the video level. For purposes of video classification, the low level motion features were only marginally outperforming models in which no motion was modeled.

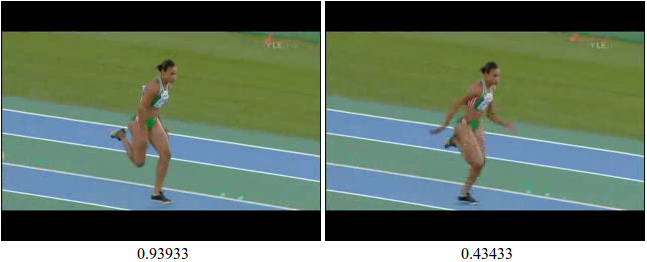

To understand why, consider the following two images which are very similar visually but obtain drastically different scores from a CNN model trained on static images:

|

| Slight differences in object poses/context can change the predicted class/confidence of CNNs trained on static images. |

To get around this frame-by-frame confusion, we used feature pooling networks that independently process each frame and then pool/aggregate the frame-level features over the entire video at various stages. Another approach we took was to utilize an RNN (derived from Long Short Term Memory units) instead of feature pooling, allowing the network itself to decide which parts of the video are important for classification. By sharing parameters through time, both feature pooling and RNN architectures are able to maintain a constant number of parameters while capturing a global description of the video’s temporal evolution.

In order to feed the two aggregation approaches, we compute an image “pixel-based” CNN model, based on the raw pixels in the frames of a video. We processed videos for the “pixel-based” CNNs at one frame per second to reduce computational complexity. Of course, at this frame rate implicit motion information is lost.

To compensate, we incorporate explicit motion information in the form of optical flow - the apparent motion of objects across a camera's viewfinder due to the motion of the objects or the motion of the camera. We compute optical flow images over adjacent frames to learn an additional “optical flow” CNN model.

|

| Left: Image used for the pixel-based CNN; Right: Dense optical flow image used for optical flow CNN |

short video showing some example outputs from the deep convolutional networks presented in our paper.

1 Research carried out in collaboration with University of Maryland, College Park PhD student Joe Yue-Hei Ng and University of Texas at Austin PhD student Matthew Hausknecht, as part of a Google Software Engineering Internship↩

Quick links

×

❮

❯