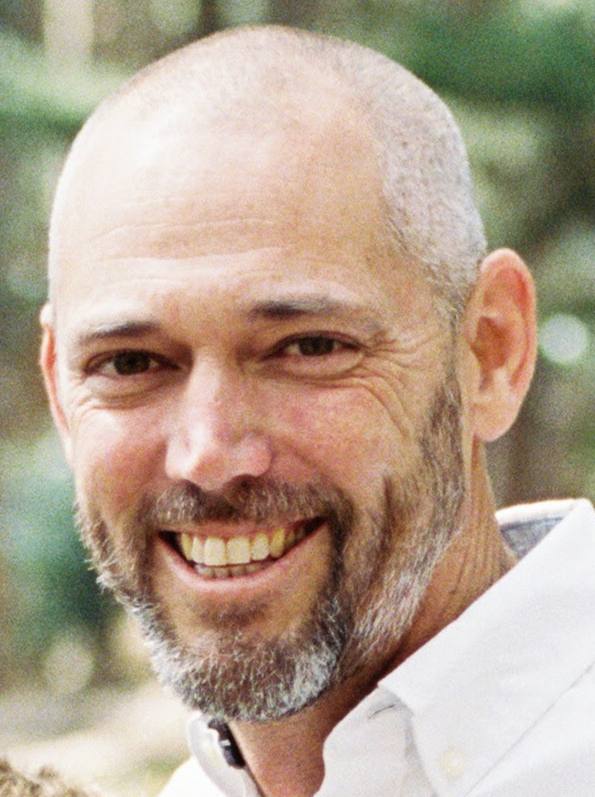

Collin Green

Collin is the User Experience Research lead and manager of the Engineering Productivity Research team within Developer Intelligence. The Engineering Productivity Research team brings a data-driven approach to business decisions around engineering productivity. They use a combination of qualitative and quantitative methods to triangulate on measuring productivity. Collin received his Ph.D. in Cognitive Psychology from the University of California, Los Angeles.

Authored Publications

In a prior column, we wrote about how measuring productivity can be viewed as a form of modeling and that all models are wrong, but some are useful. That discussion centered on the idea of ensuring that a productivity model was inclusive of multiple metrics and that those metrics covered the various facets of productivity and covered each facet reasonably well. In that article, we set aside the question of what makes a good individual productivity metric that can be combined with others into a (hopefully) useful model of productivity. In this article, we’ll share some things we consider when building an individual metric, including an example of a novel metric we built in the aftermath of the COVID pandemic.

Here’s a thought experiment. Say I wave a magic wand across a codebase and an entire class of technical debt, poof, goes away and immediately evaporates if introduced in the future. For example, maybe I make it so that dead feature flags are simply no longer a problem: they just delete themselves as soon as the engineer wills it. Or maybe large-scale migrations just migrate themselves. Maybe we magically have 100% test coverage, without an engineer lifting a finger.

What will happen to developer productivity?

Surely, developer productivity increases overall.

But will the productivity metrics that we all use as a proxy for “developer productivity” move up and to the right? Let’s explore this idea.

Measuring productivity is equivalent to building a model. All models are wrong, but some are useful. Productivity models are often “worryingly selective” (wrong because of omissions). Worrying selectivity can be combated by taking a holistic approach that includes multiple measurements of multiple outcomes. Productivity models should include multiple outcomes, metrics, and methods.

Creativity in software development is frequently overlooked, specifically in the design of developer tools, which often focus on productivity. This is likely because creativity is not always seen as a goal in software engineering; in part, this can be explained by the unique way in which software engineers relate to creativity as centered around reusability rather than novelty. However, creativity is a critical aspect of software engineering, and importantly, there is a clear possibility for AI to impact creativity in both positive and negative ways. In this article, we explore the differences in goals for designing AI tools for productivity compared to creativity and propose strategies to elevate creativity in the software engineering workflow. Specifically, we apply seamful design to AI-powered software development to consider the role of seamfulness in software development workflows as a way to support creativity.

AI-powered software development tooling is changing the way that developers interact with tools and write code. However, the ability of AI to truly transform software development depends on developers' level of trust in the tools. In this work, we take a mixed methods approach to measuring the factors that influence developers' trust in AI-powered code completion. We identified that familiarity with AI suggestions, quality of the suggestion, and level of expertise with the language all increased the acceptance rate of AI-powered suggestions, while suggestion length and presence in a test file decreased acceptance rates. Based on these findings, we propose recommendations for the design of AI-powered development tools to improve trust.

This is the seventh installment of the Developer Productivity for Humans column. This installment focuses on software quality: what it means, how developers see it, how we break it down into 4 types of quality, and the impact these have on each other.

Measuring Developer Experience with a Longitudinal Survey

Jessica Lin

Jill Dicker

IEEE Software (2024)

At Google, we’ve been running a quarterly large-scale survey with developers since 2018. In this article, we will discuss how we run EngSat, some of our key learnings over the past 6 years, and how we’ve evolved our approach to meet new needs and challenges.

Systemic Gender Inequities in Who Reviews Code

Emerson Murphy-Hill

Jill Dicker

Amber Horvath

Laurie R. Weingart

Nina Chen

Computer Supported Cooperative Work (2023) (to appear)

Code review is an essential task for modern software engineers, where the author of a code change assigns other engineers the task of providing feedback on the author’s code. In this paper, we investigate the task of code review through the lens of equity, the proposition that engineers should share reviewing responsibilities fairly. Through this lens, we quantitatively examine gender inequities in code review load at Google. We found that, on average, women perform about 25% fewer reviews than men, an inequity with multiple systemic antecedents, including authors’ tendency to choose men as reviewers, a recommender system’s amplification of human biases, and gender differences in how reviewer credentials are assigned and earned. Although substantial work remains to close the review load gap, we show how one small change has begun to do so.

Developer Productivity for Humans, Part 5: Onboarding and Ramp-Up

Nan Zhang

Lanting He

Heng Liu

Demei Shen

IEEE Software Magazine (2023)

In this installment of our column, we’ll describe some recent research on onboarding software developers, including some of the work that we’ve done with colleagues at Google to understand and measure developer onboarding and ramp-up at Google.
