Google Brain Residency Program - 7 months in and looking ahead

January 5, 2017

Posted by Jeff Dean, Google Senior Fellow and Leslie Phillips, Google Brain Residency Program Manager


“Beyond being incredibly instructive, the Google Brain Residency program has been a truly affirming experience. Working alongside people who truly love what they do--and are eager to help you develop your own passion--has vastly increased my confidence in my interests, my ability to explore them, and my plans for the near future.”

-Akosua Busia, B.S. Mathematical and Computational Science, Stanford University ’16

2016 Google Brain Resident

In October 2015 we launched the Google Brain Residency, a 12-month program designed to jumpstart careers in machine learning and deep learning research. The program offers hands-on experience with the state-of-the-art infrastructure available at Google and the chance to work alongside top researchers on the Google Brain team.

Our first group of residents arrived in June 2016, working with researchers on problems at the forefront of machine learning. The wide array of topics studied by residents reflects the diversity of the residents themselves. Some come to the program as new graduates, with degrees ranging from BAs to PhDs in fields including computer science, physics, mathematics, biology, and neuroscience, while others come with years of industry experience under their belts. All of them share a passion for learning how to conduct machine learning research.

The breadth of research being done by the Google Brain team, along with flexibility in pairing residents with mentors, ensures that residents with interests in machine learning algorithms and reinforcement learning, natural language understanding, robotics, neuroscience, genetics, and more are able to find good mentors to help them pursue their ideas and publish interesting work. Just seven months into the program, the residents are already making an impact in the research field.

To date, Google Brain Residents have submitted a total of 21 papers to leading machine learning conferences, spanning topics from enhancing low-resolution images to building neural networks that in turn design novel, task-specific neural network architectures. Of those 21 papers, 5 were accepted at the recent BayLearn Conference (two of which, “Mean Field Neural Networks” and “Regularizing Neural Networks by Penalizing Their Output Distribution”, were presented in oral sessions), 2 were accepted at the NIPS 2016 Adversarial Training workshop, and another at ISMIR 2016 (see the full list of papers, including the 14 submissions to ICLR 2017, after the figures below).

|

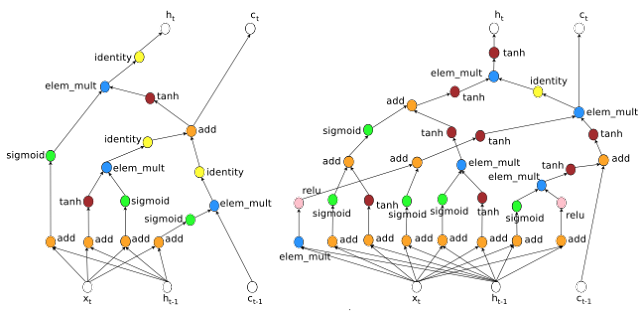

| An LSTM Cell (Left) and a state of the art RNN Cell found using a neural network (Right). This is an example of a novel architecture found using the approach presented in “Neural Architecture Search with Reinforcement Learning” (B. Zoph and Q. V. Le, submitted to ICLR 2017). This paper uses a neural network to generate novel RNN cell architectures that outperform the widely used LSTM on a variety of different tasks. |

|

| The training accuracy for neural networks, colored from black (random chance) to red (high accuracy). Overlaid in white dashed lines are the theoretical predictions showing the boundary between trainable and untrainable networks. (a) Networks with no dropout. (b)-(d) Networks with dropout rates of 0.01, 0.02, 0.06 respectively. This research explores whether theoretical calculations can replace large hyperparameter searches. For more details, read “Deep Information Propagation” (S. S. Schoenholz, J. Gilmer, S. Ganguli, J. Sohl-Dickstein, submitted to ICLR 2017). |

Accepted conference papers (Google Brain Residents marked with asterisks)

Unrolled Generative Adversarial Networks

Luke Metz*, Ben Poole, David Pfau, Jascha Sohl-Dickstein

NIPS 2016 Adversarial Training Workshop (oral presentation)

Conditional Image Synthesis with Auxiliary Classifier GANs

Augustus Odena*, Chris Olah, Jon Shlens

NIPS 2016 Adversarial Training Workshop (oral presentation)

Regularizing Neural Networks by Penalizing Their Output Distribution

Gabriel Pereyra*, George Tucker, Lukasz Kaiser, Geoff Hinton

BayLearn 2016 (oral presentation)

Mean Field Neural Networks

Samuel S. Schoenholz*, Justin Gilmer*, Jascha Sohl-Dickstein

BayLearn 2016 (oral presentation)

Learning to Remember

Aurko Roy, Ofir Nachum*, Łukasz Kaiser, Samy Bengio

BayLearn 2016 (poster session)

Towards Generating Higher Resolution Images with Generative Adversarial Networks

Augustus Odena*, Jonathon Shlens

BayLearn 2016 (poster session)

Multi-Task Convolutional Music Models

Diego Ardila, Cinjon Resnick*, Adam Roberts, Douglas Eck

BayLearn 2016 (poster session)

Audio DeepDream: Optimizing Raw Audio With Convolutional Networks

Diego Ardila, Cinjon Resnick*, Adam Roberts, Douglas Eck

ISMIR 2016 (poster session)

Papers under review (Google Brain Residents marked with asterisks)

Learning to Remember Rare Events

Lukasz Kaiser, Ofir Nachum*, Aurko Roy, Samy Bengio

Submitted to ICLR 2017

Neural Combinatorial Optimization with Reinforcement Learning

Irwan Bello*, Hieu Pham*, Quoc V. Le, Mohammad Norouzi, Samy Bengio

Submitted to ICLR 2017

HyperNetworks

David Ha*, Andrew Dai, Quoc V. Le

Submitted to ICLR 2017

Outrageously Large Neural Networks: The Sparsely-Gated Mixture-of-Experts Layer

Noam Shazeer, Azalia Mirhoseini*, Krzysztof Maziarz, Quoc Le, Jeff Dean

Submitted to ICLR 2017

Neural Architecture Search with Reinforcement Learning

Barret Zoph* and Quoc Le

Submitted to ICLR 2017

Deep Information Propagation

Samuel Schoenholz*, Justin Gilmer*, Surya Ganguli, Jascha Sohl-Dickstein

Submitted to ICLR 2017

Capacity and Trainability in Recurrent Neural Networks

Jasmine Collins*, Jascha Sohl-Dickstein, David Sussillo

Submitted to ICLR 2017

Unrolled Generative Adversarial Networks

Luke Metz*, Ben Poole, David Pfau, Jascha Sohl-Dickstein

Submitted to ICLR 2017

Conditional Image Synthesis with Auxiliary Classifier GANs

Augustus Odena*, Chris Olah, Jon Shlens

Submitted to ICLR 2017

Generating Long and Diverse Responses with Neural Conversation Models

Louis Shao, Stephan Gouws, Denny Britz*, Anna Goldie, Brian Strope, Ray Kurzweil

Submitted to ICLR 2017

Intelligible Language Modeling with Input Switched Affine Networks

Jakob Foerster, Justin Gilmer*, Jan Chorowski, Jascha Sohl-Dickstein, David Sussillo

Submitted to ICLR 2017

Regularizing Neural Networks by Penalizing Confident Output Distributions

Gabriel Pereyra*, George Tucker*, Jan Chorowski, Lukasz Kaiser, Geoffrey Hinton

Submitted to ICLR 2017

Unsupervised Perceptual Rewards for Imitation Learning

Pierre Sermanet, Kelvin Xu*, Sergey Levine

Submitted to ICLR 2017

Improving Policy Gradient by Exploring Under-appreciated Rewards

Ofir Nachum*, Mohammad Norouzi, Dale Schuurmans

Submitted to ICLR 2017

Protein Secondary Structure Prediction Using Deep Multi-scale Convolutional Neural Networks and Next-Step Conditioning

Akosua Busia*, Jasmine Collins*, Navdeep Jaitly

The diverse and collaborative atmosphere fostered by the Brain team has resulted in a group of researchers making great strides in a wide range of research areas, which we are excited to share with the broader community. We look forward to even more innovative research from our 2016 residents, and are excited for the program to continue into its second year!

We are currently accepting applications for the 2017 Google Brain Residency Program. To learn more about the program and to submit your application, visit g.co/brainresidency. Applications close January 13th, 2017.


Labels:

- Machine Intelligence
