Building better AI benchmarks: How many raters are enough?

March 31, 2026

Flip Korn and Chris Welty, Research Scientists, Google Research

We introduce an evaluation framework for ML models, based on “gold” ratings data, that optimizes the trade-off between the number of items and raters per item, providing a roadmap for building highly reproducible AI benchmarks that capture the nuance of human disagreement.

Quick links

In machine learning, reproducibility measures how easy it is to repeat the same experiments — using the same code, data/distribution, and settings — and get the same results. A high level of reproducibility enables trust between teams and allows them to build on each other’s progress.

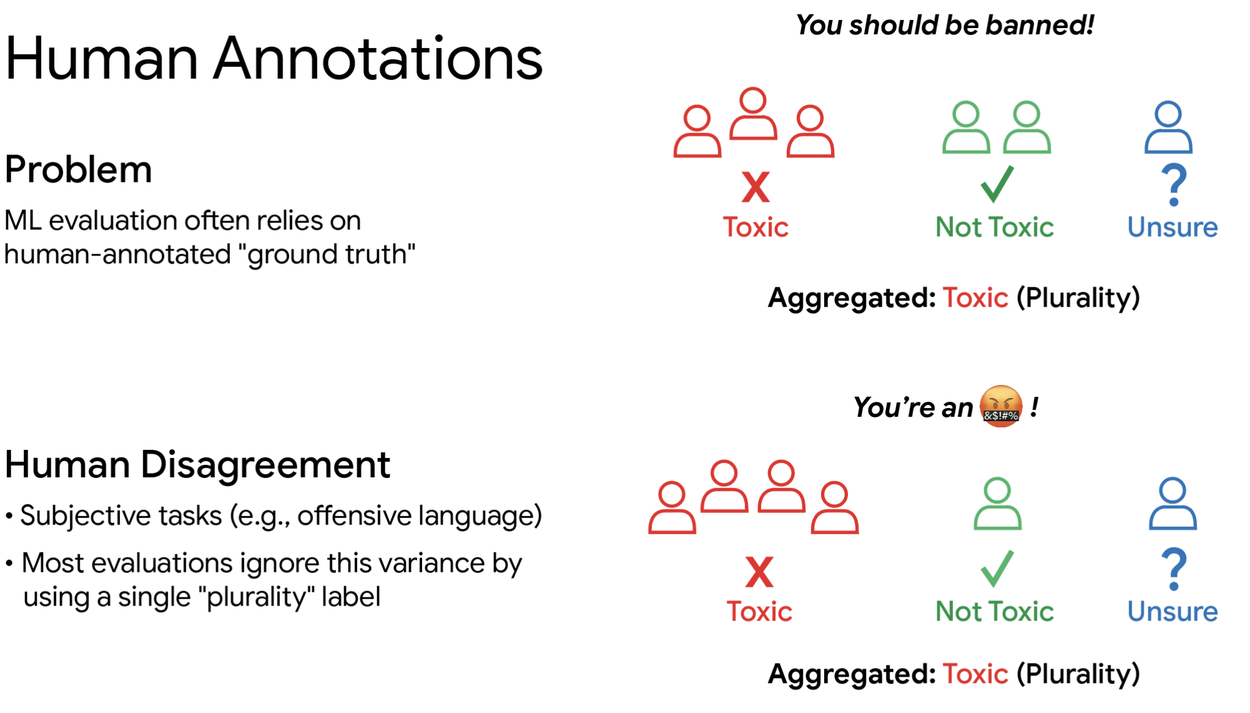

The challenge with reproducibility is that ground truth data usually relies on humans; and humans, unlike machines, approach all problems from a variety of perspectives and often disagree on the result. Surprisingly little research has studied the impact of effectively ignoring human disagreement, which is a common oversight in AI benchmarking. One reason for the lack of research is that budgets for collecting human-backed evaluation data are limited, and obtaining more samples from multiple raters for each example greatly increases the per-item annotation costs.

Using plurality to represent multiple ratings ignores the variation. Both examples above have the same plurality but the latter is more clearly leaning towards "Toxic".

In “Forest vs Tree: The (N,K) Trade-off in Reproducible ML Evaluation,” we investigate the reproducibility trade-off between the ratio of items being rated to the number of human raters per item. Is it better to have fewer raters for many items or many raters for fewer items? Think of this as a question between breadth and depth. The breadth (i.e., the forest) approach asks 1,000 different people to each try one meal at a restaurant to get an overall sense of quality. The depth (tree) approach asks 20 people to try the same 50 meals, revealing more about specific dishes, which might influence the overall rating.

Historically, AI evaluation has leaned toward the forest approach. Most researchers settle for 1 to 5 raters per item, assuming this is enough to find a single "correct" truth. Our research suggests this standard is often insufficient at capturing natural disagreement, and we provide a roadmap for building more reliable and cost efficient AI benchmarks.

The experiment: Simulating the budget

Subjectivity undermining empirical benchmarking is the primary challenge to reproducibility. If two different researchers run the same evaluation and get different results, the research isn't reproducible. To find the optimal balance between the number of items being rated and the number of raters per item, we developed a simulator based on real-world datasets that involve subjective tasks like toxicity and hate speech detection.

We essentially conducted a massive "stress test" to find the most efficient way to spend a given research budget (e.g., measured in cost, time, etc.). We changed two main levers to see which yielded the most reliable results:

- The Scale (N): Total number of items being rated (ranging from a small budget of 100 to a large budget of 50,000).

- The Crowd (K): How many people look at the same item (ranging from 1 person to a crowd of 500).

We used a simulator to test thousands of such combinations across various scales to see which configurations were the most statistically reliable (with p < 0.05) — and thus reproducible.

Flow chart for our evaluation framework for comparing ML models A and B in relation to “gold” labels.

To support the broader community, we have open-sourced this simulator on GitHub.

Datasets

We use multiple datasets, each comprising various categories with several responses per item:

- The Toxicity dataset consists of 107,620 social media comments labeled by 17,280 raters.

- The DICES Diversity in Conversational AI Evaluation for Safety dataset consists of 350 chatbot conversations rated for safety by 123 raters across 16 safety dimensions.

- D3code is a large crosscultural dataset comprising 4,554 items, each labeled for offensiveness by 4,309 raters from 21 countries and balanced across gender and age.

- Jobs is a collection of 2,000 job-related tweets labeled by 5 raters each. The raters answer 3 questions about each tweet, and the corresponding sets are denoted by JobsQ1/2/3. The categories in JobsQ1/2/3 represent the point of view of job-related information, employment status, and job transition events, respectively.

Using these datasets, we also tested what happens when the data is "messy". For instance, if 99% of emails are spam and only 1% are important (indicating high data skew), does that change the optimal rater distribution (breadth vs. depth)? In addition, we also explored the effect of having more data categories, e.g., toxicity tags, such as toxic, mildly offensive, neutral, etc.

Key findings: One size does not fit all

Our study revealed three major insights that challenge the status quo of machine learning evaluation:

1. The "standard" of 3–5 raters is not enough

Our results show that the common practice of using 1, 3 or 5 raters per item is often insufficient. This “low-rater” approach does not provide enough breadth to see the big picture, nor enough depth to understand the nuance of human opinion. To achieve truly reliable results that reflect human nuance, practitioners often need more than 10 raters per item.

Having more raters per item increases statistical significance as p-value approaches zero. This means we can discard the null hypothesis that models A and B perform equally well, which the simulator ensures is not the case.

2. The metric dictates the strategy

There is no "perfect" ratio. Instead, the optimal trade-off depends entirely on what is being measured:

- Accuracy – The majority vote: If the goal is simply to see if a model matches the "majority vote" of humans, the forest approach is generally better. Adding more items helps more than adding more raters.

- Nuance – The range of opinions: If the full range of human opinion is desired — accounting for the fact that a "maybe" is different from a "yes" — the tree approach is more effective. Increasing the number of raters is the only way to capture the "total variation" of human thought.

3. Efficiency is within reach

The most encouraging finding is that one doesn’t need an infinite budget. We found that by optimizing the ratings-per-item ratio correctly based on the chosen metric, one can achieve highly reproducible results with a modest budget of around 1,000 total annotations. However, choosing the wrong balance can lead to unreliable conclusions, even with an increase in research budget.

Why this matters for the future of AI

This research is vital for the future of reliable AI. For years, the field has operated under a "single truth" paradigm — the idea that for every input, there is one "right" label. But even when there’s a single ground-truth it may not be possible to measure it. And as AI moves into more subjective areas like ethics, identifying subjective concepts like harmful intent or the character of social interaction, that paradigm breaks down.

By moving away from the “forest" and embracing the “tree", we can build benchmarks that actually reflect the complexity and different perspectives that lead to the natural disagreement found in the human world. This roadmap allows practitioners to design better, more reproducible tests without overspending. Ultimately, understanding why humans disagree is just as important as knowing where they agree, and our research provides the mathematical tools to capture both.

Acknowledgements

This work owes much credit to our collaborators PhD student Deepak Pandita and Prof. Christopher Homan at RIT.