Security, privacy and abuse

Software engineering and programming language researchers at Google study all aspects of the software development process, from the engineers who make software to the languages and tools that they use.

About the team

Our team brings together experts from systems, networking, cryptography, machine learning, human-computer interaction, and user experience to advance the state of security, privacy, and abuse research. Our mission is straightforward:

Make the world's information trustworthy and safe. We strive to keep all information on the Internet free of deceptive, fraudulent, unwanted, and malicious content. Users should never be at risk for sharing personal data, accessing content, or conducting business on the Internet.

Defend users, everywhere. Internet users can face incredibly diverse threats, from governments to cybercrime and sexual abuse. We approach research, design, and product development with the goal of protecting every user, no matter their needs, from the start.

Advance the state of the art. Making the Internet safer requires support from the public, academia, and industry. We foster initiatives that shape the direction of security, privacy, and abuse research for the next generation.

Build privacy for everyone. It's a responsibility that comes with creating products and services that are free and accessible for all. This is especially important as technology progresses and privacy needs evolve. We look to these principles to guide our products, our processes, and our people in keeping our users' data private, safe, and secure.

Team focus summaries

Our crawlers and analysis engines represent the state of the art for detecting malware, unwanted software, deception, and targeted attacks. These threats span the web, binaries, extensions, and mobile applications.

We continue to develop one of the most sophisticated machine learning and reputation systems to protect users from scams, hate and harassment, and sexual abuse.

We are pioneering advancements in browser, mobile, and cloud security including sandboxing, auto-updates, fuzzing, program analysis, formal verification, and vulnerability research.

Our team of warrior scientists actively protects users and businesses from payment fraud, invalid traffic, denial of service, and bot automation. We fight all things fake—installs, clicks, likes, views, and accounts.

We are re-defining informed user decision making. Our work spans warnings, notifications, and advice. This includes informing our designs based on the privacy attitudes, personas, and segmentation across Internet users.

We are developing the next generation of mechanisms for encryption (e.g., end-to-end & post-quantum) authentication, and privacy-preserving analytics. This includes advanced modeling to detect account hijacking.

Featured publications

Highlighted work

-

Inside "MOAR TLS:" How We Think about Encouraging External HTTPS adoption on the WebThis project is guiding the web towards HTTPS everywhere by methodically hunting and addressing major hurdles for TLS adoption.

Inside "MOAR TLS:" How We Think about Encouraging External HTTPS adoption on the WebThis project is guiding the web towards HTTPS everywhere by methodically hunting and addressing major hurdles for TLS adoption. -

Announcing the first SHA1 collisionIn collaboration with the Cryptology Group at Centrum Wiskunde & Informatica (CWI) - the national research institute for mathematics and computer science in the Netherlands we have broken SHA-1 in practice.

Announcing the first SHA1 collisionIn collaboration with the Cryptology Group at Centrum Wiskunde & Informatica (CWI) - the national research institute for mathematics and computer science in the Netherlands we have broken SHA-1 in practice. -

Meltdown and SpectreLearn how Google’s Project Zero team discovered serious security flaws caused by “speculative execution,” a technique used by most modern processors (CPUs) to optimize performance. Independent researchers separately discovered and named these vulnerabilities “Spectre” and “Meltdown.”

Meltdown and SpectreLearn how Google’s Project Zero team discovered serious security flaws caused by “speculative execution,” a technique used by most modern processors (CPUs) to optimize performance. Independent researchers separately discovered and named these vulnerabilities “Spectre” and “Meltdown.” -

Keeping 2 billion Android devices safe with machine learningGoogle Play Protect's suite of mobile threat protections are built into more than 2 billion Android devices, automatically taking action in the background. We're constantly updating these protections so you don't have to think about security: it just happens.

Keeping 2 billion Android devices safe with machine learningGoogle Play Protect's suite of mobile threat protections are built into more than 2 billion Android devices, automatically taking action in the background. We're constantly updating these protections so you don't have to think about security: it just happens. -

Insider attack resistanceWe are making the encryption on Google Pixel 2 devices resistant to various levels of attack—from platform, to hardware, all the way to the people who create the signing keys for Pixel devices.

Insider attack resistanceWe are making the encryption on Google Pixel 2 devices resistant to various levels of attack—from platform, to hardware, all the way to the people who create the signing keys for Pixel devices. -

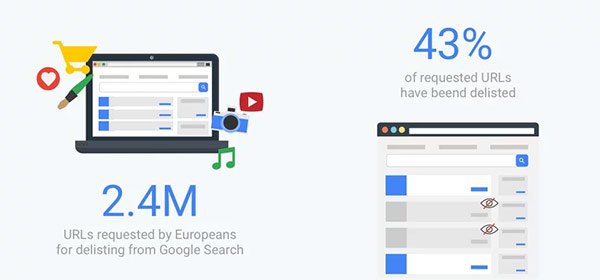

Updating our “right to be forgotten” Transparency ReportWe expanded the scope of our transparency reporting about the “right to be forgotten” and added new data going back to January 2016. Our goal with this project was to inform an ongoing discussion about the interplay between the right to privacy and the right to access lawful information online.

Updating our “right to be forgotten” Transparency ReportWe expanded the scope of our transparency reporting about the “right to be forgotten” and added new data going back to January 2016. Our goal with this project was to inform an ongoing discussion about the interplay between the right to privacy and the right to access lawful information online. -

LocoMocoSec 2018Emily Schechter talks about "The Trouble with URLs, and how Humans (Don't) Understand Site Identity"

LocoMocoSec 2018Emily Schechter talks about "The Trouble with URLs, and how Humans (Don't) Understand Site Identity"

Some of our locations

Some of our people

-

Sunny Consolvo

- Human-Computer Interaction and Visualization

- Security, Privacy and Abuse Prevention

-

Adrienne Porter Felt

- Human-Computer Interaction and Visualization

- Software Engineering

- Security, Privacy and Abuse Prevention

-

Billy Lau

- Data Management

- Hardware and Architecture

- Mobile Systems

- +3 more

-

Ben Laurie

- Software Engineering

- Software Systems

- Security, Privacy and Abuse Prevention

-

Petros Maniatis

- Machine Intelligence

- Mobile Systems

- Software Engineering

- +2 more

-

Panayiotis Mavrommatis

- Security, Privacy and Abuse Prevention

-

Moheeb Abu Rajab

- Responsible AI

- Security, Privacy and Abuse Prevention

-

Robert W. Reeder

- Human-Computer Interaction and Visualization

- Security, Privacy and Abuse Prevention

-

Nina Taft

- Human-Computer Interaction and Visualization

- Networking

- Security, Privacy and Abuse Prevention

-

Kurt Thomas

- Security, Privacy and Abuse Prevention

-

Elie Bursztein

- Data Mining and Modeling

- General Science

- Human-Computer Interaction and Visualization

- +2 more

-

Rene Mayrhofer

- Distributed Systems and Parallel Computing

- Mobile Systems

- Networking

- +2 more