Solving the minimum cut problem for undirected graphs

April 16, 2024

Di Wang, Research Scientist, Google Research

Quick links

A graph is a ubiquitous data structure used in computer science that consists of nodes (or vertices) and edges between pairs of nodes to capture objects and their relations. The minimum cut problem (often referred to as “min-cut”) is a basic structural question about the connectivity of a graph that asks: what is the least expensive way to disconnect a network? More formally, given an input graph where edges have no orientation (i.e., the graph is undirected) and are associated with positive weights quantifying the importance of the edges (e.g., capacity of a road, or strength of a relationship, level of similarity between the endpoints, etc.), a cut is a partition of the nodes into two sides. The size of a cut is the total weight of edges connecting nodes on different sides of the cut, and the min-cut problem is to find a cut of the minimum size.

Solving it efficiently has been one of the most fundamental problems in algorithmic graph theory. Moreover, min-cut has diverse applications in practice such as image restoration, stereo and segmentation in computer vision, and network resilience analysis (such as for roads or power grids). It is also generally very useful when the underlying graph data is too large and needs to be partitioned into smaller components to be processed in a divide-and-conquer manner.

In the theory of algorithm design, the asymptotic complexity for any problem that requires reading the entire input (which is the case for min-cut) is at least linear in the size of the input (since that is the time needed to read the input). A nearly-linear time algorithm essentially achieves this lower-bound, and thus is canonically viewed as the optimal result one can achieve. For the min-cut problem, existing nearly-linear time algorithms are either randomized (which may output an incorrect answer with some probability) or only work for the special case when the graph is simple (which cannot model many real-world applications), so its optimal complexity remains an open problem.

In “Deterministic Near-Linear Time Minimum Cut in Weighted Graphs”, which co-won the best paper award at the ACM-SIAM Symposium on Discrete Algorithms (SODA2024), we design the first nearly-linear algorithm for the min-cut problem that is deterministic (i.e., always finds the correct answer) and that also works for general graphs, thus settling the optimal complexity for the min-cut problem.

Technical insights

Our result is the culmination of a long line of research, and algorithmic advances on this problem (including ours) are usually motivated by structural discoveries of graph connectivity. In particular, a seminal result by Karger in 1996 gave a nearly-linear time randomized algorithm that finds a min-cut with high probability, and a critical insight from that work was the existence of a much smaller graph that largely preserves all cuts’ size. This is useful since one can afford to run a slower algorithm with the smaller graph as input, and the slower running time (in terms of the size of the smaller graph) can still be nearly-linear in the size of the original (larger) graph. Indeed, many of the structural discoveries on the min-cut problem are along this direction, and the high-level idea of reducing problem size while preserving structures of interest has been widely impactful in algorithm design.

Cut-preserving graph sparsification

We start by discussing the structural insight used by Karger in more detail. Starting with a graph G with n nodes, the cut-preserving sparsification by Benczúr and Karger established the existence of a sparse weighted graph G’ on the same set of nodes with a smaller number of edges such that with high probability, every cut S’s size in G’ is roughly the same as its size in G. This idea is illustrated below, where the original graph consists of two complete graphs connected by a single edge (i.e., the dumbbell graph), and the sparsified graph has fewer, but larger weight, edges, while all the cut sizes are approximately preserved.

Illustration of the cut preserving graph sparsification.

To algorithmically construct such a sparser graph, Benczúr and Karger used the approach of sampling edges independently, where each edge in G is included in G’ with some probability, and its weight in G’ is scaled up by the reciprocal of the sampling probability (e.g., an edge of original weight 1 in G would have a weight of 10 in G’ if it’s included with a 10% chance). It turns out that with high probability, this remarkably simple (and nearly-linear time) method can successfully construct a cut-preserving graph sparsification.

The cut-preserving graph sparsification, along with several other creative algorithmic ideas, yielded Karger's breakthrough result. However, Karger’s algorithm is a Monte Carlo algorithm, i.e., the output may be incorrect with (small) probability, and there is no known way to tell if the output is correct other than comparing it with an actual known min-cut. Since then, researchers have been on the quest to resolve the open question of a nearly-linear time deterministic algorithm. In particular, the construction of the cut-preserving graph sparsification is the only component in Karger’s algorithm that is randomized, and an apparent recipe is to find a deterministic construction (a.k.a. derandomization) of the sparsification in nearly-linear time.

In 2015, Kawarabayashi and Thorup achieved a major milestone with such a deterministic nearly-linear time algorithm for simple graphs, i.e., graphs that have at most one edge between every pair of nodes and all edge weights equal to 1. The key observation in that work is a connection between min-cut and another important graph structure known as a low-conductance cut (explained below). This connection also turned out to be critical in later efforts to derandomize Karger’s algorithm on graphs of general edge weights, which eventually culminated in our result.

Alignment of min-cut and low-conductance cut

The conductance of a cut S is defined as the ratio of the cut size of S over the volume of S (assuming S is the smaller volume side of the cut and is non-empty), where the volume of S is the sum of the degree of the nodes in S. A cut S of low conductance intuitively captures a bottleneck in a network, as there is only a small number of edges (relative to its volume) connecting S to the rest of the graph. The conductance of a graph is defined as the min conductance of any cut in the graph, and a graph of large conductance (a.k.a. an expander graph) is considered well-connected as there is no bottleneck inside.

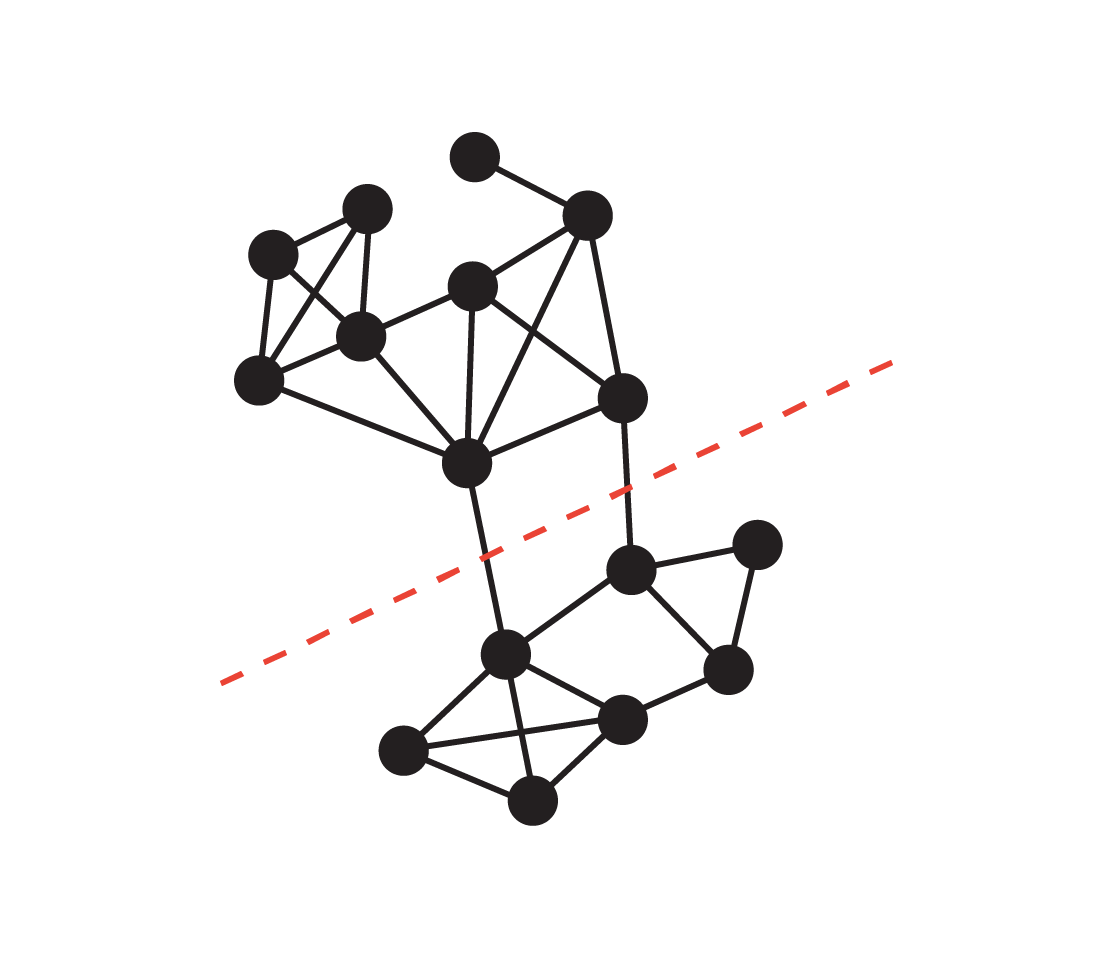

The cut represented by the red dotted line has size 2, and the smaller side (the bottom) has volume 24, so its conductance is 1/12, which is also the graph’s conductance.

Kawayabarashi and Thorup made the observation that any non-trivial (i.e., both sides have at least two nodes) min-cut must have low conductance in a simple graph where the min node degree is large. Following this observation, if one can partition the graph into well-connected clusters, the partitioning must be consistent with every non-trivial min-cut in the sense that each cluster must lie entirely on one side of every such cut. One can then contract each cluster into a single node, and work on the smaller graph where all non-trivial min-cuts of the original graph are intact.

However, for weighted graphs the same observation no longer holds, and the same partitioning used in the simple graph case may not be exactly consistent with non-trivial min-cuts. Nonetheless, Li 2021 observed that such a partitioning is still approximately consistent with non-trivial min-cuts as illustrated in the figure below. In particular, for a non-trivial min-cut S, there exists a cut S’ that is not too different from S such that S’ is consistent with the clusters. Li further observed that this property of the partitioning can be exploited to efficiently derandomize the construction of cut-preserving graph sparsification.

A partitioning of the graph that is approximately consistent with near-minimum cuts.

In our new result, we devise an algorithm to construct such a partitioning tailored to our use case of finding min-cut. Compared to the more generic off-the-shelf method used by Li in the previous work, our tailored construction is much more precise, so that the original min-cut S and its corresponding cluster-consistent cut S’ (in the figure above) are guaranteed to have more similar cut sizes. Moreover, our algorithm is faster than off-the-shelf methods, which comes by improving previous clustering techniques developed solely for simple graphs (by Henzinger, Rao and Wang in 2017) to work more broadly on weighted graphs. The stronger precision and running time guarantees achieved by the new construction ultimately lead to our nearly-linear time deterministic algorithm for the min-cut problem.

Acknowledgements

We are grateful to our co-authors Monika Henzinger, Jason Li, and Satish Rao. We would also like to extend special thanks to John Guilyard for creating the animation used in this post.

-

Labels:

- Algorithms & Theory